I am very excited because, assuming what we’re seeing resembles what we actually end up with (with the strong caveat that it has to work well in fast motion), it’s absolutely transformative as a technology from day one, let alone as it matures over the years.

These aren’t stylistic disagreements or opinion differences.

This is a counter to the absolutely ridiculous levels of braindead YouTube rage bait we’ve been seeing from so many creators, all mindlessly copying each other with the same phrases and slogans, none of which are grounded whatsoever in reality.

I also dislike how Minecraft looks, but that’s a subjective opinion on art style, not an objective claim about photorealism.

If the yardstick we’re using to measure DLSS 5 is one of realism, definition, lighting accuracy, etc., then there is a clear improvement.

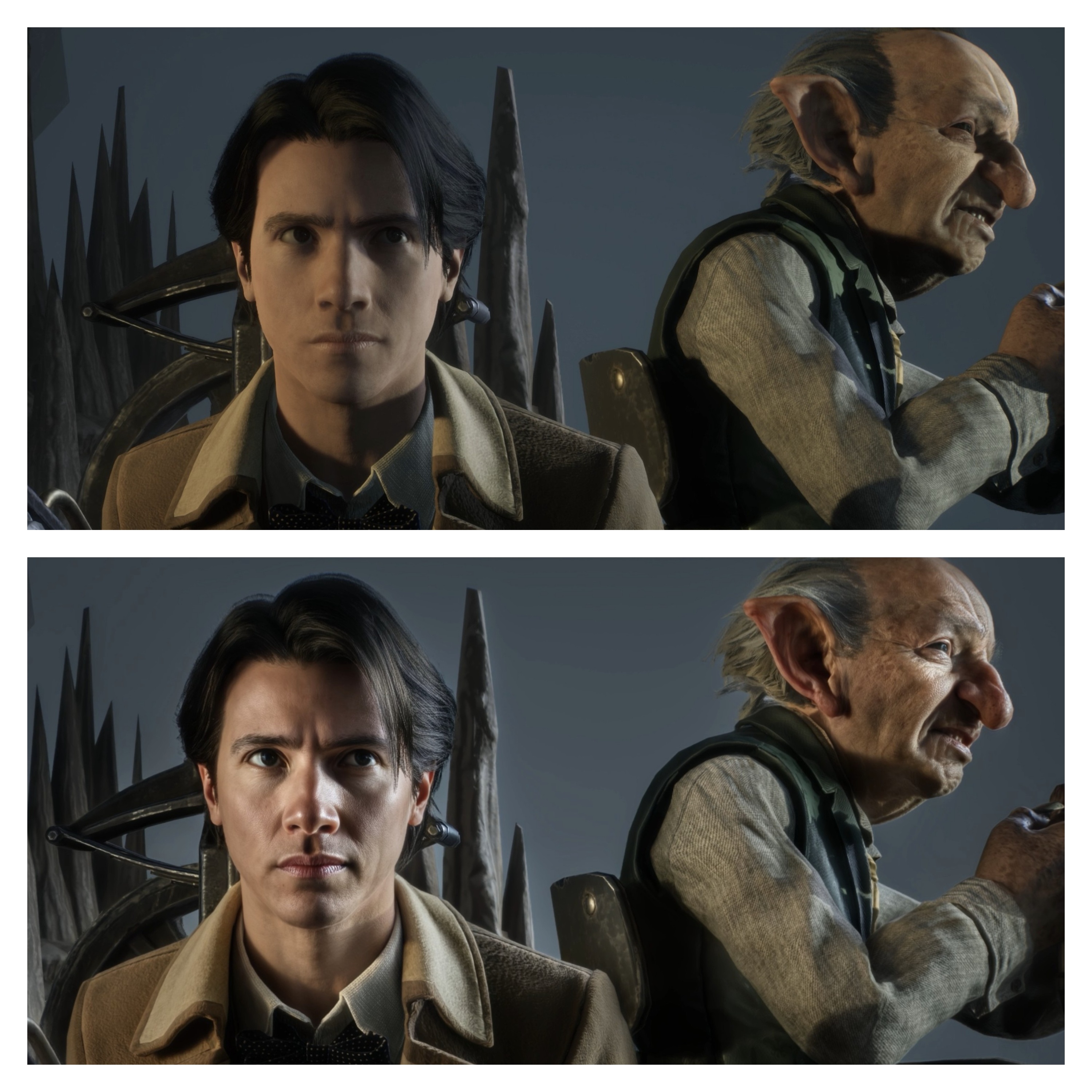

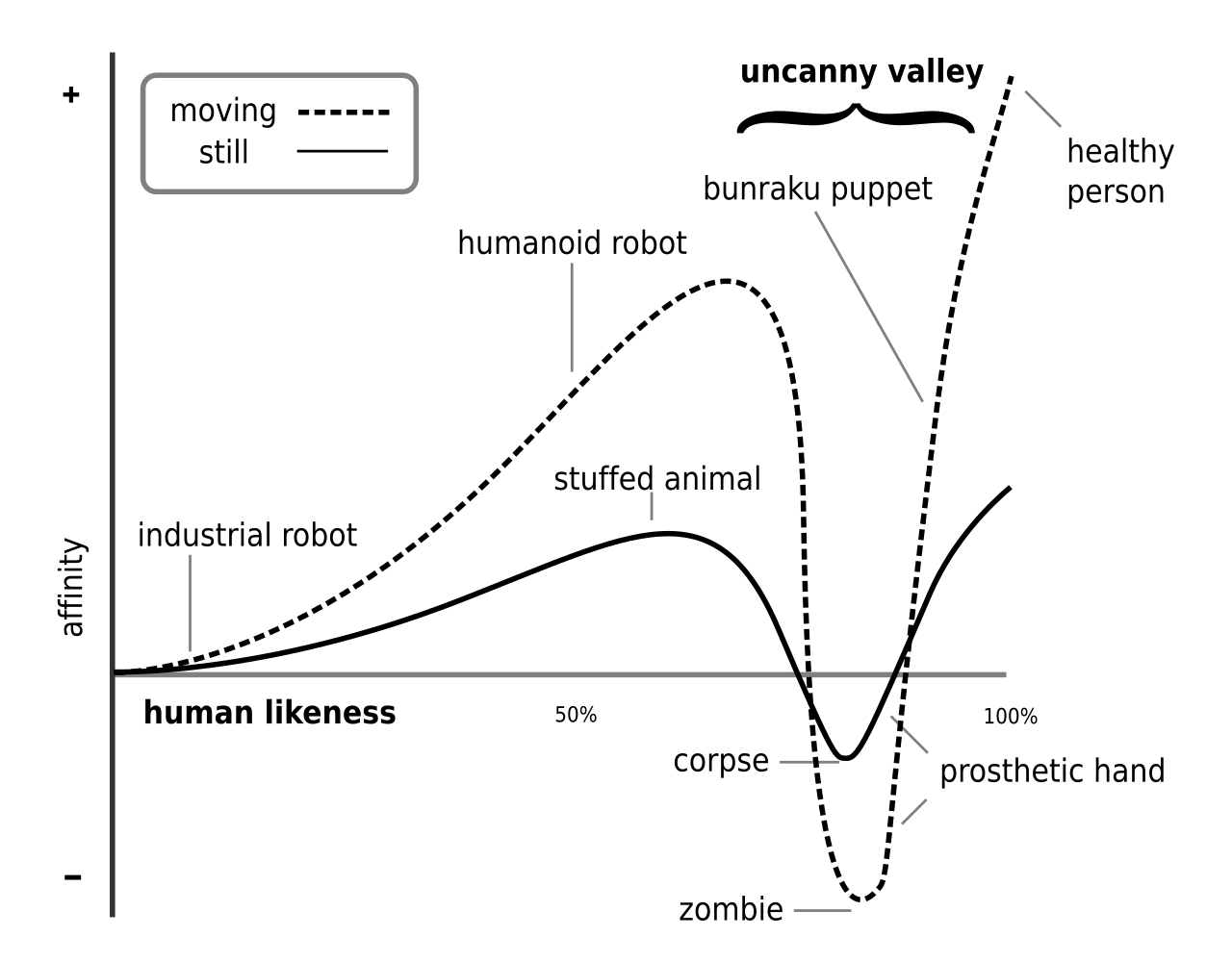

People may dislike the look stylistically, especially if the increase in realism is provoking the “uncanny valley” effect and the psychological discomfort it evokes in some people, but that doesn’t mean it’s not more photorealistic.

Again, this is a conflation of subjective opinions on art style with things that are objective and measurable in terms of accuracy versus reality.

If you or anyone else truly believes that the “off” shots look better to your taste, based on style or whatever other reason, that’s fine, it’s an opinion. But this relentless, mindlessly repeated claim from so many creators that the “on” shots look like less realistic slop is just garbage bandwagon jumping.

Do you have any good examples where you think that the “on” shots look less realistic than the “off” shots? I’ve asked repeatedly and so far no one’s made a convincing case.

If people feel so strongly that this is ugly, unrealistic, AI slop that makes things look worse, then shouldn’t it be fairly easy for them to provide side-by-side shots or clips that clearly demonstrate that to be the case?

I think the fact that people haven’t been forthcoming with examples speaks volumes.

Anyway, for those who are interested, here’s a lot more hands-on footage that I’ve not seen elsewhere, including some very interesting in-motion examples of the technology.