
Triggered by the launch of Microsoft's Xbox One S, a revision of the prior Xbox One console that features a slimmer form factor, 4K Blu-ray playback, 4K game upscaling, HDR support and an overclocked processor, High Dynamic Range (HDR) is set to be the next step forward in display technology.

In this article I will explain what HDR is, how it works, whether it matters for gaming and whether it's a greater visual improvement than moving to 4K. Think of this article as a shortcut explanation that does away with the unneeded and vastly detailed information. While the technology is largely limited to TVs, as opposed to the gaming monitors that most gamers prefer for their low response times and choice of panel technology, HDR will be arriving on PC displays, with graphics cards already providing the software and hardware to support it. For now, the decision to move to 4K or HDR comes down primarily to availability: 4K displays are on the market, where HDR displays are almost non-existent. This leaves gamers with the difficult decision of waiting for HDR displays and 4K HDR displays to arrive, with the latter being more expensive as they incorporate both technologies.

What Is HDR And What Does It Do?

HDR (high dynamic range) allows for a wider range of colours and a greater level of contrast, meaning the highest levels of brightness are higher and the lowest levels of black are far deeper. This is the dynamic range: the span between the largest and smallest measurements at each end of the scale. In order to view HDR images and video, an HDR-compatible display is required. To put things into perspective, let's take a look at how SDR (standard dynamic range) compares to HDR.

An SDR display will produce an 8-bit colour range measured at 16.8 million colours, with 6 stops of dynamic range at a maximum brightness level measured at 400 nits. 16.8 million colours means each pixel can present 256 shades each of red, green and blue.

An HDR display will produce a 10-bit colour range measured at over 1.07 billion colours, up to 20 stops of dynamic range, with a maximum brightness level well over 1000 nits. 1.07 billion colours means each pixel can present 1,024 shades each of red, green and blue.
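The colour counts quoted for SDR and HDR follow directly from the bit depth. As a minimal sketch (the function name `colour_depth` is my own, purely for illustration), the arithmetic looks like this:

```python
def colour_depth(bits_per_channel: int) -> tuple[int, int]:
    """Return (shades per channel, total colours) for an RGB pixel."""
    shades = 2 ** bits_per_channel   # levels each of red, green and blue can take
    total = shades ** 3              # every combination of the three channels
    return shades, total


for bits in (8, 10):
    shades, total = colour_depth(bits)
    print(f"{bits}-bit: {shades} shades per channel, {total:,} colours")
```

Two extra bits per channel quadruple the shades of each primary, which multiplies the total number of colours by 64.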

Playground Games And Microsoft Studios' Forza Horizon 3. SDR.

Image Courtesy Of NeoGaf. http://www.neogaf.com/forum/showthread.php?t=1283285&page=8

HDR

What are the benefits of an increased colour range?

Given the additional tones of colour, the increased levels of brightness and the deeper levels of black, the amount of detail within a picture or video can be greatly increased and resolved within each individual pixel. This allows for finer details in brighter scenes, more clarity in darker scenes and a more accurate representation of the colours that reside within the image.
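To give the stop figures quoted earlier some scale: a stop is a doubling of light, so a range measured in stops converts to a contrast ratio as a power of two. The sketch below assumes only that definition. The function names are my own, and the 0.001 nit black level in the last line is a made-up figure for illustration, not a measurement of any real display.

```python
import math


def stops_to_contrast(stops: float) -> float:
    """Contrast ratio (brightest : darkest) covered by a number of stops."""
    return 2.0 ** stops


def contrast_to_stops(brightest_nits: float, darkest_nits: float) -> float:
    """Number of stops between the brightest and darkest luminance levels."""
    return math.log2(brightest_nits / darkest_nits)


print(f"6 stops  = {stops_to_contrast(6):,.0f}:1")
print(f"20 stops = {stops_to_contrast(20):,.0f}:1")

# Hypothetical panel: 1000 nit peak with an assumed 0.001 nit black level.
print(f"{contrast_to_stops(1000, 0.001):.1f} stops")
```

By this measure, 6 stops covers a 64:1 range while 20 stops covers roughly a million to one.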

An Image Comparison Of SDR Vs HDR. Image Has Been Adjusted In Order To Demonstrate Differences In Colour On A Non-HDR Display. An HDR Compatible Display Is Required In Order To View Authentic HDR

What Is 4K And What Does It Do?

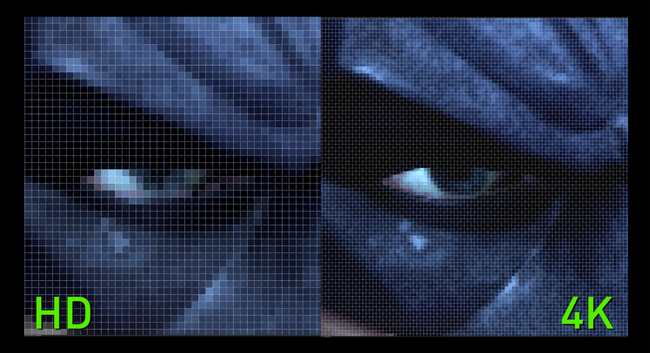

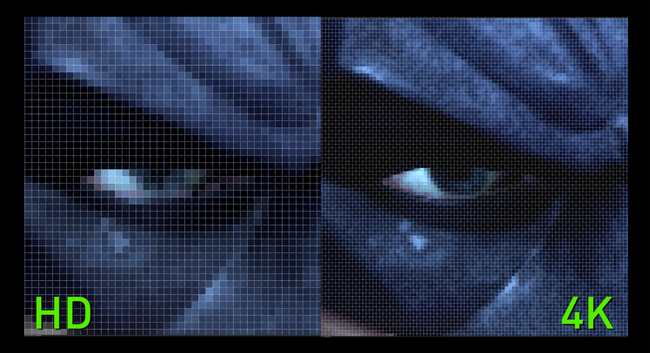

Most modern TVs have a maximum resolution of 1920 X 1080p (HD). This is the number of pixels across the display horizontally and vertically, each of which changes colour to present an image. The move to 4K will see TVs with four times the number of pixels of an HD display, a resolution of 3840 X 2160p.
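The "four times" figure can be checked with a quick calculation. This is only a sketch of the arithmetic, with `pixel_count` being a name I've chosen for illustration:

```python
def pixel_count(width: int, height: int) -> int:
    """Total number of pixels in a display of the given resolution."""
    return width * height


hd = pixel_count(1920, 1080)
uhd = pixel_count(3840, 2160)

print(f"HD: {hd:,} pixels")
print(f"4K: {uhd:,} pixels")
print(f"4K has {uhd // hd} times the pixels of HD")
```

Doubling both the width and the height is what produces the fourfold increase.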

WB Games Montréal's Batman: Arkham Origins. Zoomed In At 1080p And 4K Resolution

With an increase in resolution, more detail can be resolved from the image, as it has more pixels to divide its information across. This means images will be sharper and crisper, and the colours available within the dynamic range will be more fully realised and clearer for the viewer to see. Within games this will increase the quality of textures, lighting and shadows. It also allows for less blur in fast-moving scenes, especially when combined with a display featuring low response times.

How Much Does Resolution Improve The Gaming Experience?

Naturally, displays have always seen an increase in the number of pixels they contain. This will continue to happen as the benefits are clear. The human eye doesn't see in pixels, as it isn't a digital technology interpreting visual information. It takes in the visual information available to it without having to divide that information into a set number of pixels.

While an increase in resolution is good, what's so good about these extra pixels if the range of colour remains the same? It's essentially the same set of colours, just spread across more pixels that attempt to realise additional information without displaying any more colours on the screen. Take, for instance, games which attempt to deliver a realistic aesthetic. Ryse: Son of Rome, Rise of the Tomb Raider, Battlefield 1, Assassin's Creed: Unity and Quantum Break all demonstrate this.

They employ visual techniques often used in film to convey an image that's highly detailed, emphasises lighting and is softer as a whole, moving away from the traditional rendering techniques used in video games in order to eliminate harsh textures and sharp geometry. While the PC versions of these games see an increase in quality over their console variants, due to the increase in resolution and graphical detail, none of these games looks terrible, nor do they look more "gamey", as a result of their reduced resolution and detail when played on a console.

Crytek's Ryse: Son of Rome

Masters of rendering technology who have aimed to push the boundaries since their first well-known title, Remedy Entertainment have always strived for a realistic aesthetic, one that prioritises pixel detail and techniques used in film over increasing the resolution with no quality to back it up. When Remedy released their psychological thriller Alan Wake on the Xbox 360, they made the decision to focus on the technique with which the game renders detail and lighting, rather than pursuing a clearer image through an increase in pixels.

Displaying the game at a resolution of 960 X 540p seems rather low, especially when you consider that the vast majority of developers were targeting 1280 X 720p and 1920 X 1080p on the Xbox 360. Thanks to the rendering techniques the studio employed, which emphasised lighting, bloom effects and the detail within the many dark and foggy areas of the game's world, Alan Wake proved to be visually stunning, ahead of most games released at the time. Later down the line the game was released on the PC.

Remedy Entertainment's Alan Wake

With this release one would expect the increase in resolution to deliver superior results and a far more detailed game, exceeding the original. Yet increasing the resolution on the PC while keeping the detail and texture levels of the original game didn't produce a better-looking game. Only when the higher levels of graphical detail and textures were enabled did the game look better. The reason for this is simple: increasing resolution alone, without the visual assets to back it up, will only result in a crisper, sharper image of the already available assets.

As the original game used filmic techniques to strike a balance between texture, detail, lighting, resolution and the like, the image it presented proved adequate at the low resolution. Many games since have adopted the same visual styling as Remedy's Alan Wake, and titles such as Crytek's Ryse: Son of Rome and Remedy's own Quantum Break stand out as visual showpieces. Looking at all three games, which all saw releases on both the Xbox platform and the PC, the improvement from console to PC isn't as spectacular as one would expect.

Remedy Entertainment's Quantum Break

All of these games deliver an image that's startlingly low in resolution on Microsoft's platforms. None of them looks bad as a result, even if they do present themselves as superior on the PC, which allows for resolutions ranging all the way up to 4K. The reason is that they opt for a filmic aesthetic reminiscent of Alan Wake. This brings us back to the question of HDR and 4K, and which of the two could deliver the greater improvement to gaming, given that the aforementioned games gained minimal improvement from resolution. Keeping to the three games in question, where would they see the most benefit?

Extra pixels or a wide range of colour that can realise a greater amount of detail in both the darkest and brightest scenes of an image?

To put it another way – is the quantity of pixels more important than the quality of the pixels?

HDR For Exaggerated Realism

HDR does have its benefits for titles that attempt to emulate realism through their visuals. As noted with the aforementioned titles, resolution alone can never be the deciding factor in how far visual detail can be pushed. This is especially true when you consider that a video game rendered at a high resolution doesn't look any more detailed, natural, authentic or "real" than a DVD-quality movie.

This stands to reason when you take into account that a video game is totally artificial in its characters, environments and settings, whereas a movie is recorded footage of real ones, digitally translated into a set number of colours and displayed through pixels. But what about games that do not attempt realism?

Nintendo's Pikmin 3

Consider those more in line with cartoon-like visuals and exaggerated realism, with a visual quality akin to movies such as The Incredibles, Ratatouille or Monsters, Inc. Games such as Crash Bandicoot, Jak and Daxter, Pikmin, Street Fighter and Donkey Kong do without a doubt gain a visual benefit from rendering at a higher resolution. But the detail these games contain isn't all that deep in itself; it depends more on lighting, geometry depth and, as stated previously, colour. Because of that emphasis, these games see greater benefit from an expanded colour palette.

Disney and Pixar Studios' The Incredibles Movie

In terms of where HDR and 4K find more benefits, there's no doubt that games will find advantages in both. However, with all that said, it would seem that higher resolutions hold more benefits for the clarity and sharpness of movie viewing, regardless of whether the source material captures the real world or CGI. Games are greatly dependent on an artificial level of detail in order to make the most of their rendering resolution. That detail level is currently limited by geometry, lighting and general rendering methods, as well as by the range of available colours under SDR standards.

So long as games continue to adopt higher resolutions rather than prioritising the technique with which the game is rendered and the range of available colours, which in turn provide higher levels of detail, they will always appear dated in the years that follow. This is especially true for games with a realistic look that depend on resolution to present their aesthetics. It is also why games such as Mario Kart 8, Prince of Persia (2008), Transistor, Ori and the Blind Forest, and Okami hold up far better today and will in the years to come, since their visual style is inspired by Disney, Pixar and cartoons. While these few games do look better at higher resolutions, they also look great without them, and like the games emulating realism they stand to gain more from HDR.

What Are The Requirements For HDR And 4K Gaming?

While the Xbox One S may have HDR and 4K upscaling capabilities, there are some caveats to the methods implemented. HDR requires no additional processing power to use. The graphics chip of the console or PC simply needs to support the functionality in hardware. It can be implemented through software modifications, but it will be severely limited. HDR also requires a DisplayPort 1.4 or HDMI 2.0a connection and a display capable of showing HDR media. Finally, HDR support must be built into the game itself.

4K upscaling also requires no additional processing power. So long as the aspect ratio of the screen matches that of the resolution being displayed, either the display itself, the console or the PC will scale the pixels to fit the display. 4K, or 3840 X 2160p, has exactly four times the pixels of HD, or 1920 X 1080p: twice as many horizontally and twice as many vertically. This means the scaling will be precise when outputting 4K, as each HD pixel maps onto a 2 X 2 block of 4K pixels.
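To illustrate why a whole-number scale factor is "precise", here is a minimal sketch of the simplest possible upscaler, one that just repeats each source pixel. It is not the scaler used by the Xbox One S or by any particular TV. The function name and the tiny 2 X 2 test image are my own, with each number standing in for a pixel's colour.

```python
def upscale_integer(image, factor=2):
    """Repeat every pixel `factor` times horizontally and vertically."""
    scaled = []
    for row in image:
        # Stretch the row sideways: each pixel appears `factor` times in a row.
        wide_row = [pixel for pixel in row for _ in range(factor)]
        # Stack `factor` copies of the stretched row to stretch it vertically.
        for _ in range(factor):
            scaled.append(list(wide_row))
    return scaled


small = [[1, 2],
         [3, 4]]

for row in upscale_integer(small):
    print(row)
```

Every source pixel lands on a clean 2 X 2 block with nothing left over, which is exactly what happens going from 1920 X 1080 to 3840 X 2160.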

Ryse: Son of Rome Upscaling Comparison. Finer Details Resolve On The Native 1080p Image, But The Image Sees No Significant Improvement Due To The Game's Rendering Technique. The 1600 X 900p Image Upscale Isn't Common Due To The Increase Being Only Around 30%, As Well As The Lack Of Displays Adopting The Resolution. Image courtesy of WCCFTech

As 4K upscaling is simply a technique for extrapolating extra pixels that do not exist in the HD source image, results will not always be satisfying. Most upscaling techniques work by stretching or blurring the colours of each pixel over a larger number of pixels in order to fill the display, after which a sharpening filter is applied to clean up the image. Others work by having the device or system attempt to calculate which colours should be displayed around each pixel, then filling the surrounding areas so that the image can scale to the display. Some methods work better than others, but none compare to a native 4K image with 3840 X 2160 true pixels.
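As a rough illustration of the "blend the neighbouring colours" style of upscaling, here is a sketch of bilinear interpolation on a greyscale image stored as a list of rows. This is one textbook method chosen for clarity, and I'm not claiming it is what any given TV or console does. The function name is my own, the sharpening pass is left out, and the edges of the output are pinned to the edges of the source.

```python
def upscale_bilinear(image, new_width, new_height):
    """Upscale a greyscale image by blending the four nearest source pixels."""
    old_height, old_width = len(image), len(image[0])
    result = []
    for y in range(new_height):
        # Map the output row back to a fractional row in the source image.
        src_y = y * (old_height - 1) / (new_height - 1) if new_height > 1 else 0.0
        y0 = int(src_y)
        y1 = min(y0 + 1, old_height - 1)
        fy = src_y - y0
        row = []
        for x in range(new_width):
            src_x = x * (old_width - 1) / (new_width - 1) if new_width > 1 else 0.0
            x0 = int(src_x)
            x1 = min(x0 + 1, old_width - 1)
            fx = src_x - x0
            # Blend horizontally on the two neighbouring rows, then vertically.
            top = image[y0][x0] * (1 - fx) + image[y0][x1] * fx
            bottom = image[y1][x0] * (1 - fx) + image[y1][x1] * fx
            row.append(top * (1 - fy) + bottom * fy)
        result.append(row)
    return result


source = [[0, 100],
          [100, 200]]

for row in upscale_bilinear(source, 3, 3):
    print(row)
```

The new in-between pixels are averages of their neighbours, not detail from the game itself, which is why upscaled images look softer than native ones.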

Because the number of pixels is greater than that of HD, additional processing power is required to render native 4K. If a user wishes to run a game at 4K resolution while keeping the same frame rate it had in HD, the processing power required to render the game will be four times as much, if not more.
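A back-of-the-envelope way to see the "four times" figure is to count how many pixels have to be drawn every second. The sketch below assumes rendering cost scales in a straight line with pixel count, which is a simplification (hence "if not more"). The function name and the 60 frames per second target are my own choices for illustration.

```python
def pixels_per_second(width, height, frames_per_second):
    """Pixels that must be rendered each second at a resolution and frame rate."""
    return width * height * frames_per_second


hd_rate = pixels_per_second(1920, 1080, 60)
uhd_rate = pixels_per_second(3840, 2160, 60)

print(f"1080p at 60 fps: {hd_rate:,} pixels per second")
print(f"4K at 60 fps:    {uhd_rate:,} pixels per second")
print(f"Relative cost:   {uhd_rate / hd_rate:.0f}x")

# Frame rate the 1080p60 pixel budget would manage at 4K, under the same assumption.
equivalent_fps = hd_rate / (3840 * 2160)
print(f"Same budget at 4K: {equivalent_fps:.0f} fps")
```

Put another way, hardware that only just holds 60 frames per second at 1080p would land at around 15 at 4K under this simple model.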

Rise Of The Tomb Raider. 4K Image Resized For The Purposes Of Viewing.

While a 4K-capable display is required to display a game in native 4K, there are solutions that allow users to gain an improvement in game resolution while keeping a standard HD display. For owners of Nvidia and AMD graphics cards, the driver control panels for each provide options for rendering at higher resolutions on an HD display. Nvidia's technology is called DSR (Dynamic Super Resolution), and AMD's is called VSR (Virtual Super Resolution).

These technologies allow the graphics card to render the internal image of a game at a higher resolution than the display can provide, then downsample it to fit the display. In effect this applies anti-aliasing in the form of super-sampling, then shrinks the image back down to the resolution of the display. While this brings drastic visual improvements, and a larger benefit than upscaling, it doesn't look as good as a native 4K resolution, and it requires almost as much processing power as rendering the image in native 4K.
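The downsampling step can be sketched as averaging each block of rendered pixels into one display pixel. This is a plain box filter picked for simplicity, and I'm not suggesting it is the filter DSR or VSR use internally. The function name and the sample values are my own.

```python
def downsample_box(image, factor=2):
    """Shrink a greyscale image by averaging each factor x factor block."""
    height, width = len(image), len(image[0])
    result = []
    # Step through the image one block at a time, ignoring any ragged edge.
    for y in range(0, height - height % factor, factor):
        row = []
        for x in range(0, width - width % factor, factor):
            block = [image[y + dy][x + dx]
                     for dy in range(factor)
                     for dx in range(factor)]
            row.append(sum(block) / len(block))
        result.append(row)
    return result


# A hard black/white checker edge rendered at twice the display resolution.
rendered = [[0, 100, 50, 50],
            [100, 0, 50, 50]]

print(downsample_box(rendered))
```

The harsh 0/100 pattern averages out to a smooth 50, which is the anti-aliasing effect super-sampling provides on jagged edges.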

HDR and 4K are both significant leaps for display technology. While the latter has existed in PC gaming for a number of years, it hasn't been widely adopted, both because of the extreme performance it demands from graphics cards (the capable ones have always sat at the high end rather than being affordable to the majority of gamers) and because of the lack of 4K-capable displays in the few years prior.

HD displays have become long in the tooth. Modern graphics cards, even on the low end, are more than capable of displaying games at that resolution, at 60 frames per second and at detail levels that far eclipse those of game consoles. Video games have always dictated and pioneered display technology and 3D rendering. With TV manufacturers refusing to acknowledge this audience, producing displays that cater to slow-moving scenes and image quality rather than the low input lag and fast refresh rates that gaming demands, gaming monitors have become the number one choice for gamers.

HDR availability may be limited on gaming monitors at this time, but gamers who can tolerate the specifications offered by a TV will find far wider availability there. Moving to 4K and HDR will bring benefits, but if display availability forces a choice of one or the other, with gaming monitors that integrate both carrying substantially higher prices, which technology will you decide to adopt?

