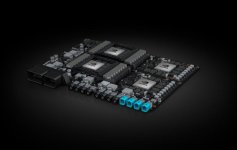

The NVidia GV100 News Thread

- Thread starter Kaapstad

Latest News.

https://www.techpowerup.com/238758/nvidia-announces-saturnv-ai-supercomputer-powered-by-volta

https://www.techpowerup.com/238716/...-successor-codenamed-ampere-expected-gtc-2018

https://www.techpowerup.com/237730/25-companies-developing-level-5-robotaxis-on-nvidia-cuda-gpus

https://blogs.nvidia.com/blog/2017/07/22/tesla-v100-cvpr-nvail/

http://www.fudzilla.com/news/43628-nvidia-announces-dgx-station-with-four-volta-v100s

http://www.fudzilla.com/news/43627-nvidia-announces-dgx-1-powered-by-volta

http://www.fudzilla.com/news/graphics/43625-nvidia-announces-tesla-v100-volta

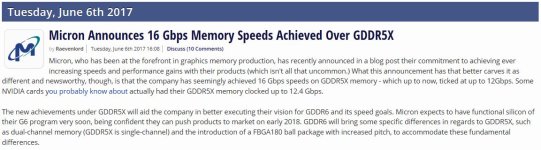

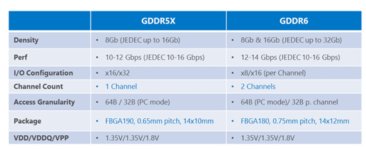

Future Memory Developments.

Reserved 6

Reserved 7

Reserved 8

Reserved 9

I thought I would start a news thread for big Volta, as it could get quite interesting if I regularly keep the OPs updated.

If people would like to discuss big Volta and post items that could be used in the OP that would be very useful.

Associate

Well, so much for the GTX 2080 rumours...

Soldato

Holy Crap.

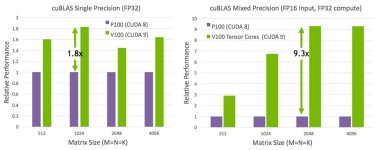

Tensor cores for mat-mat multiplication? 120TF/s throughput?! If it's practical to extract even a small fraction of this potential, then there are going to be a lot of people in the scientific computing field buying a V100.

I'm working on dense linear algebra right now, so I'm going to need to get my hands on one ASAP. Just need to figure out where best to go begging for the funds.

I'm sure these won't make it to the GeForce range (minimal utility for gaming), but for a lot of HPC applications this could be a game-changer.
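
For anyone wondering where a number like 120TF/s comes from, here is a rough back-of-the-envelope sketch. The figures in it are assumptions taken from Nvidia's launch-time V100 specifications as I understand them (640 tensor cores, each doing one 4x4x4 matrix fused multiply-add per clock, at a boost clock of about 1455 MHz), not anything measured. The `efficiency` parameter is purely hypothetical and only illustrates what "a small fraction of this potential" would mean in absolute terms.

```python
# Back-of-the-envelope peak tensor throughput for GV100.
# All constants are assumptions based on launch-time specs, not measurements.
TENSOR_CORES = 640             # assumed: 80 SMs x 8 tensor cores each
FMA_PER_CORE_PER_CLOCK = 64    # assumed: one 4x4x4 matrix FMA per clock
FLOPS_PER_FMA = 2              # a fused multiply-add counts as 2 operations
BOOST_CLOCK_HZ = 1.455e9       # assumed boost clock, roughly 1455 MHz


def peak_tensor_tflops(cores=TENSOR_CORES,
                       fma_per_clock=FMA_PER_CORE_PER_CLOCK,
                       clock_hz=BOOST_CLOCK_HZ):
    """Theoretical peak in TFLOPS: cores x FMAs per clock x 2 x clock."""
    return cores * fma_per_clock * FLOPS_PER_FMA * clock_hz / 1e12


def sustained_tflops(efficiency, peak=None):
    """Throughput at a given (hypothetical) fraction of peak, 0.0 to 1.0."""
    if peak is None:
        peak = peak_tensor_tflops()
    return peak * efficiency


if __name__ == "__main__":
    peak = peak_tensor_tflops()
    print(f"peak: {peak:.1f} TFLOPS")
    for eff in (0.1, 0.25, 0.5):
        print(f"{eff:.0%} of peak: {sustained_tflops(eff):.1f} TFLOPS")
```

Under those assumptions the peak lands just under the quoted 120 figure, which suggests the headline number is simply this product rounded up.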

Soldato

Wake me up when there's some GeForce news.

Good luck with raising $3Bn

gonna be a few months before they are generally available to industry (and at normal industry prices) unless you have deep pockets.

Soldato

Probably. But it depends.

We had access to the new Xeon Phi (Knights Landing) a good 9 months before they were generally available. Big boss man here has pretty close ties with Intel, as some of the work we do is related to optimising the Intel Math Kernel Library. Not sure if he can get anything from Nvidia, but I've seen stranger things happen.

More realistically, in academia, you mentally add on about a 9 to 18 month delay between trying to get some funding, and actually having it to spend. So if I start searching now, I *might* be able to buy one before it's superseded by V110.

Tensor cores for mat-mat multiplication? 120TF/s throughput?!

Would you care to explain this for numpties like me?

For particular types of matrix multiplication and accumulation that can leverage mixed-precision floating point, the GV100 is stupidly fast: 10x faster than Pascal. The main application will be in deep learning, where the GV100 is basically accelerating neural network updates and propagation. This is why Nvidia have specially supported Google's TensorFlow library.
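
To make "mixed precision multiply and accumulate" a bit more concrete, here is a minimal pure-Python sketch of the idea: the inputs A and B are rounded to half precision (FP16), but the running sum for D = A x B + C is kept in single precision (FP32). This only emulates the arithmetic on a 4x4 tile and is not how the hardware is programmed. The helper names (`to_fp16`, `to_fp32`, `tile_mma`) are made up for illustration, and the rounding is done with the standard `struct` module's half and single precision formats.

```python
import struct


def to_fp16(x):
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("e", struct.pack("e", x))[0]


def to_fp32(x):
    """Round a Python float to the nearest IEEE 754 single-precision value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def tile_mma(a, b, c, round_acc=to_fp32):
    """Emulate D = A x B + C on 4x4 tiles (hypothetical helper).

    A and B are rounded to FP16 before multiplying. The accumulator is
    rounded with round_acc after every addition: to_fp32 models mixed
    precision, to_fp16 models doing everything in half precision.
    """
    n = 4
    d = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            acc = round_acc(c[i][j])
            for k in range(n):
                prod = to_fp16(a[i][k]) * to_fp16(b[k][j])
                acc = round_acc(acc + prod)
            d[i][j] = acc
    return d


ones = [[1.0] * 4 for _ in range(4)]
big_c = [[2048.0] * 4 for _ in range(4)]

d32 = tile_mma(ones, ones, big_c, round_acc=to_fp32)  # FP32 accumulation
d16 = tile_mma(ones, ones, big_c, round_acc=to_fp16)  # FP16 accumulation

print(d32[0][0])  # 2052.0: all four products are added in
print(d16[0][0])  # 2048.0: each +1 is lost, FP16 spacing here is 2
```

The point of the comparison is that cheap FP16 inputs keep the multipliers small and fast, while the wider accumulator stops small contributions from being rounded away, which matters a lot when summing many terms as in neural network training.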

There is a reason companies will be paying $18K per chip for this beast.

Permabanned

I'm sure these won't make it to the GeForce range (minimal utility for gaming), but for a lot of HPC applications this could be a game-changer.

That's why I can't understand why all the gamers were getting excited about it. We're never going to see a GTX gaming card with anything close to this, are we?

No, but the tech advancement will likely bring other advantages with it that can be applied to GeForce, and likewise the stats you could get by stripping out the other stuff leave room for a lot of potential.