OK, news seems to be popping up in various places, so I thought it best to start a thread about what will be one of the most important gaming boards for some time.

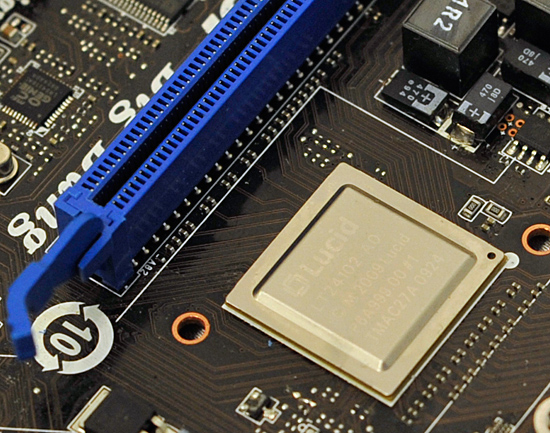

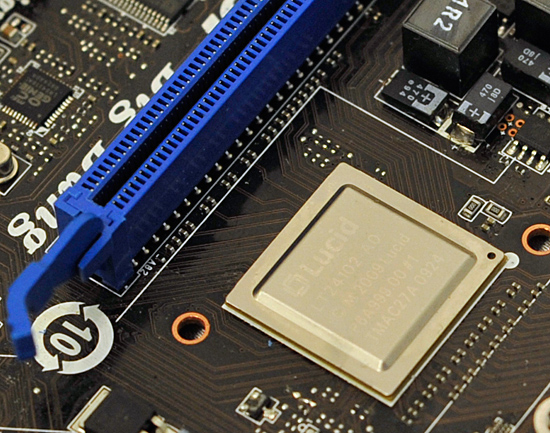

What is Lucid Hydra 200?

It's a chip created by LucidLogix which allows the harmonious use of ATI and NVIDIA technologies in a single PC. Yes, SLI and CrossFire together: CrossSLI or SLIfire.

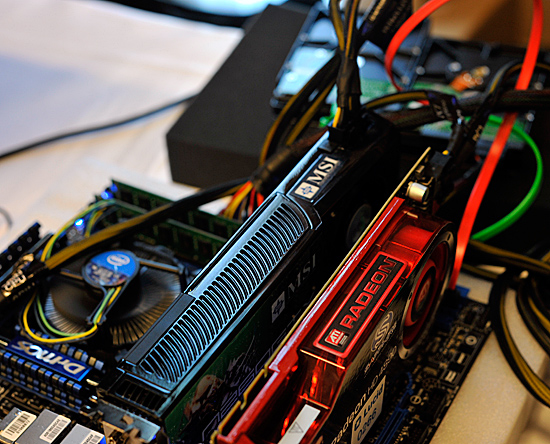

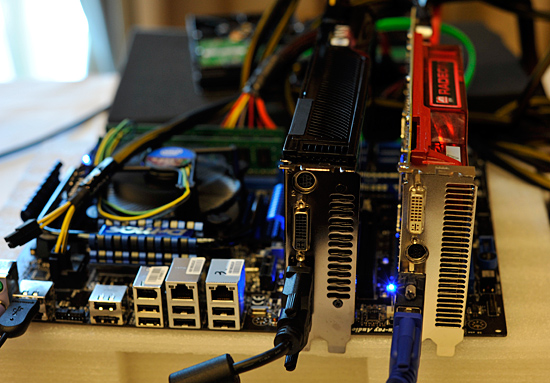

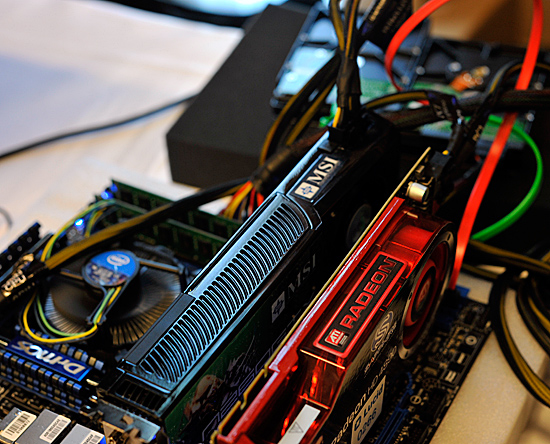

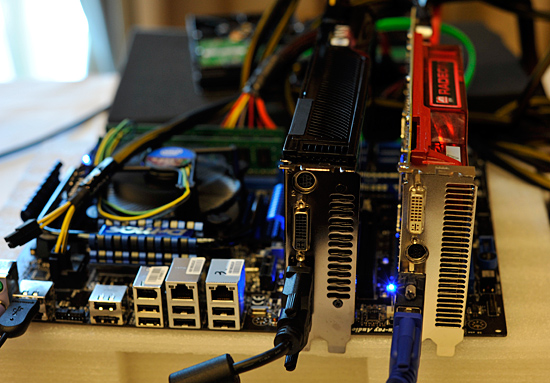

Is there not more?

The partnership between MSI and Lucid also allows cards of mismatched speeds to be used together. For example, have an old 9800GT in your cupboard from when you got your GTX 285? Slap it in and get the extra juice. Not only that, if it's a 9800 Pro (ATI), that would work too!

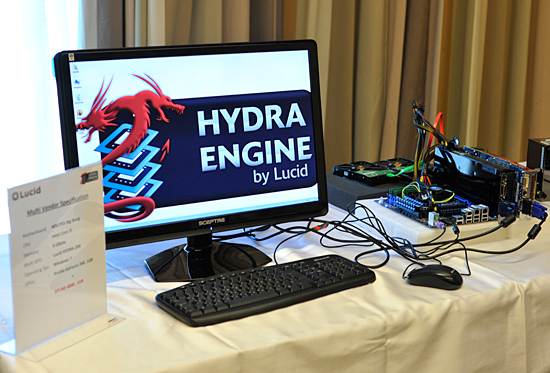

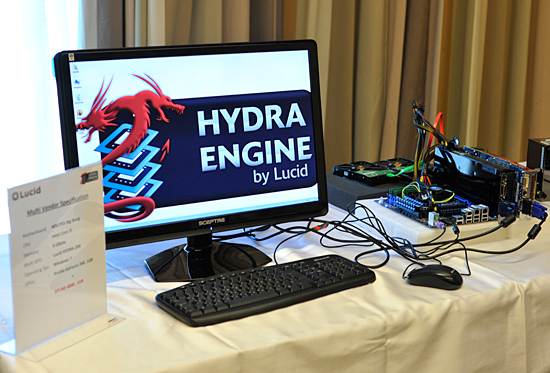

What is MSI Big Bang?

This is the first motherboard to use this technology, giving gamers everywhere more flexibility in the hardware setups they use in their systems.

This can't be true? Show me images, I need proof!

Taken from the AnandTech article:

Too bad NVIDIA kills PhysX and CUDA if an ATI card is about.
