Owners

1. Smogsy - 2x Gigabyte 980 Reference - Confirmation

2. ratters - 2x Galaxy 980 Reference - Confirmation

3. whyscotty - 2x EVGA 980 SC - Confirmation

4. MjFrosty - 3x 980 EVGA Reference - Confirmation

5. JBOD - 1x 980 EVGA Reference - Link Soon

6. besty - 1x 980 EVGA SC Reference & 1x 980 MSI Gaming - Link Soon

7. a.tunnard 1x 980 SC Reference - Confirmation

8. ScottiB - 2x EVGA SC Reference - Confirmation

9. Infamousmax - 1x EVGA Reference - Confirmation

10. Stoney - 3x 980 GALAXY - Confirmation

11. Mr Fujisawa Gigabyte 980 - Confirmation

12. Boomstick EVGA 980 SC Confirmation

13. Dangel 2x 980 Confirmation

14. Khemist - Zotac 980 Reference Confirmation

15. Gerardfraser - 980 Reference? Confirmation

16. Datblink - 2x 980 EVGA Confirmation

17. rankftw 1x 980 Confirmation link soon?

18. JaseUK 980 EVGA Confirmation link soon?

19. SS-89 980 EVGA SC Confirmation

20. NeoRed 2x 980 ASUS Confirmation

21. BlitzKrieg - 2x 980 EVGA SC - Confirmation

22. tipes 1x 980 EVGA SC - Confirmation

23. LowerRider007 - 1x 980 - Confirmation

24. Huggie86 1x 980 MSI Gaming - Confirmation

25. Evil Shubunkin - 2x 980 EVGA SC - Confirmation

26. JABenedicic - MSI Gaming 980 - Confirmation

Tech Slides

Reviews

AnandTech - http://www.anandtech.com/show/8526/nvidia-geforce-gtx-980-review

TechPowerUP - http://www.techpowerup.com/reviews/NVIDIA/GeForce_GTX_980/

GURU3D - http://www.guru3d.com/articles-pages/gigabyte-geforce-gtx-980-g1-gaming-review,1.html

Bit-Tech - http://www.bit-tech.net/hardware/graphics/2014/09/19/nvidia-geforce-gtx-980-review/1

Hexus - http://hexus.net/tech/reviews/graphics/74849-nvidia-geforce-gtx-980-28nm-maxwell/

Tech Radar - http://www.techradar.com/reviews/pc...s-cards/nvidia-geforce-gtx-980-1266202/review

HardwareCanucks - http://www.hardwarecanucks.com/foru...vidia-geforce-gtx-980-performance-review.html

Hardware Heaven - http://www.hardwareheaven.com/2014/09/geforce-gtx-980-review/

PcPerspective - http://www.pcper.com/reviews/Graphi...and-GTX-970-GM204-Review-Power-and-Efficiency

HardOCP - http://hardocp.com/article/2014/09/...eforce_gtx_980_video_card_review#.VBwvohafpAY

Benchmark Threads

Other Threads

Useful Information

DirectX 11 & 12 - http://www.anandtech.com/show/8544/microsoft-details-direct3d-113-12-new-features

NVIDIA

Overclockers NVIDIA Range can be found here

G-SYNC

PhysX

What is NVIDIA PhysX technology?

NVIDIA® PhysX® is a powerful physics engine enabling real-time physics in leading-edge PC games.

PhysX software is widely adopted by over 150 games and is used by more than 10,000 developers. PhysX is optimized for hardware acceleration by massively parallel processors.

GeForce GPUs with PhysX provide an exponential increase in physics processing power, taking gaming physics to the next level.

What is physics for gaming and why is it important?

Physics is the next big thing in gaming. It's all about how objects in your game move, interact, and react to the environment around them. Without physics in many of today's games, objects just don't seem to act the way you'd want or expect them to in real life. Currently, most of the action is limited to pre-scripted or 'canned' animations triggered by in-game events like a gunshot striking a wall. Even the most powerful weapons can leave little more than a smudge on the thinnest of walls, and every opponent you take out falls in the same predetermined fashion.

Players are left with a game that looks fine, but is missing the sense of realism necessary to make the experience truly immersive. With NVIDIA PhysX technology, game worlds literally come to life: walls can be torn down, glass can be shattered, trees bend in the wind, and water flows with body and force.

NVIDIA GeForce GPUs with PhysX deliver the computing horsepower necessary to enable true, advanced physics in the next generation of game titles, making canned animation effects a thing of the past.

Which NVIDIA GeForce GPUs support PhysX?

The minimum requirement to support GPU-accelerated PhysX is a GeForce 8-series or later GPU with a minimum of 32 cores and a minimum of 256MB dedicated graphics memory. However, each PhysX application has its own GPU and memory recommendations. In general, 512MB of graphics memory is recommended unless you have a GPU that is dedicated to PhysX.
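To make the thresholds above concrete, here is a rough sketch of that check in Python. The `Gpu` class and `physx_support` function are made up for illustration (they are not part of any NVIDIA tool), and the "GeForce 8-series or later" condition is not modelled, only the core count and memory figures quoted above.

```python
from dataclasses import dataclass

# Figures quoted in the text above.
MIN_CORES = 32
MIN_MEMORY_MB = 256
RECOMMENDED_MEMORY_MB = 512


@dataclass
class Gpu:
    """Hypothetical description of a card; not an NVIDIA data structure."""
    name: str
    cores: int
    memory_mb: int
    dedicated_to_physx: bool = False


def physx_support(gpu: Gpu) -> str:
    """Classify a card as 'unsupported', 'minimum' or 'recommended'."""
    if gpu.cores < MIN_CORES or gpu.memory_mb < MIN_MEMORY_MB:
        return "unsupported"
    # 512MB is recommended unless the card does nothing but PhysX.
    if gpu.dedicated_to_physx or gpu.memory_mb >= RECOMMENDED_MEMORY_MB:
        return "recommended"
    return "minimum"
```

For example, a card with 2048 cores and 4096MB comes out as "recommended", while a 32-core, 256MB card only does so if it is dedicated to PhysX.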

How does PhysX work with SLI and multi-GPU configurations?

When two, three, or four matched GPUs are working in SLI, PhysX runs on one GPU, while graphics rendering runs on all GPUs. The NVIDIA drivers optimize the available resources across all GPUs to balance PhysX computation and graphics rendering. Therefore users can expect much higher frame rates and a better overall experience with SLI.

A new configuration that's now possible with PhysX is two non-matched (heterogeneous) GPUs. In this configuration, one GPU renders graphics (typically the more powerful GPU) while the second GPU is completely dedicated to PhysX. By offloading PhysX to a dedicated GPU, users will experience smoother gaming.

Why is a GPU good for physics processing?

The multithreaded PhysX engine was designed specifically for hardware acceleration in massively parallel environments.

GPUs are the natural place to compute physics calculations because, like graphics, physics processing is driven by thousands of parallel computations.

Today, NVIDIA's GPUs have as many as 480 cores, so they are well suited to take advantage of PhysX software.

NVIDIA is committed to making the gaming experience exciting, dynamic, and vivid. The combination of graphics and physics impacts the way a virtual world looks and behaves.

Can I use an NVIDIA GPU as a PhysX processor and a non-NVIDIA GPU for regular display graphics?

No. There are multiple technical connections between PhysX processing and graphics that require tight collaboration between the two technologies.

To deliver a good experience for users, NVIDIA PhysX technology has been fully verified and enabled using only NVIDIA GPUs for graphics.

Can I use an AMD card?

PhysX supports both CPU and GPU simulation. GPU simulation is only available on NVIDIA graphics cards, irrespective of CPU.

Well-written applications usually check for GPU simulation support and allow for CPU fallback if it's not present.

However, CPU PhysX can seriously affect frame rate.
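The "check for GPU support, otherwise fall back to CPU" pattern can be sketched as below. This is only an illustration of the decision, not PhysX SDK code: `choose_physics_backend` and its parameters are hypothetical names, and a real game would query the driver or SDK rather than compare a vendor string.

```python
from typing import Optional


def choose_physics_backend(gpu_vendor: Optional[str],
                           meets_minimum: bool = True) -> str:
    """Return 'gpu' when GPU simulation is plausible, else 'cpu'.

    gpu_vendor    -- vendor name of the display card, or None if unknown
    meets_minimum -- whether the card meets the game's own PhysX requirements
    """
    is_nvidia = gpu_vendor is not None and gpu_vendor.strip().lower() == "nvidia"
    if is_nvidia and meets_minimum:
        return "gpu"
    # AMD/other cards, unknown hardware, or an underpowered NVIDIA card:
    # simulation still runs, but on the CPU and likely at a lower frame rate.
    return "cpu"
```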

Games that support PhysX

See link below:

http://physxinfo.com/index.php?p=gam&f=pc

Videos:

SLI

NVIDIA GameWorks™

ShadowPlay

3D Vision

NVIDIA SURROUND

CUDA

Nvidia Game Stream

PC GAMING MADE PORTABLE WITH NVIDIA GAMESTREAM™ TECHNOLOGY.

NVIDIA GameStream harnesses the power of the most advanced GeForce® GTX™ graphics cards or GRID cloud Beta and exclusive game-speed streaming technologies to bring a low-latency PC gaming experience to your SHIELD portable.

With streaming support for over 100 of the hottest PC titles at up to 1080p and 60 frames per second, SHIELD offers a truly unique handheld gaming experience.

NEW: Remotely access your PC to play your games away from your home.*

Play your favorite PC games on your HDTV at up to 1080p at 60 FPS using a wireless Bluetooth controller with SHIELD Console Mode.

NEW: Stream games like World of Warcraft, League of Legends, and DOTA 2 to your HDTV using Wi-Fi and play with full Bluetooth keyboard and mouse support.

Download GeForce Experience to see if your PC is ready.

How GAMESTREAM Works

NVIDIA uses the H.264 encoder built into GeForce GTX 650 or higher desktop GPUs and GeForce GTX Kepler and Maxwell notebooks, along with an efficient wireless streaming software protocol integrated into GeForce Experience, to stream games from the PC to SHIELD over the user's home Wi-Fi network with ultra-low latency. Gamers then use SHIELD as the controller and display for their favorite PC games, as well as for Steam Big Picture.

In addition to streaming the game, NVIDIA also configures PC games for streaming using GeForce Experience to deliver a seamless out of the box experience. There are three key activities involved here:

Generating optimal game settings for streaming games from the PC to SHIELD

We use our GeForce Experience servers to determine the best quality settings based on the user's CPU and GPU, and target higher frame rates than 'normal' optimal settings to ensure the lowest latency gaming experience. These settings are automatically applied when the game is launched so gamers don't have to worry about configuring these settings themselves.

Enabling controller support in games

This avoids gamers having to manually configure controller support in the game – it 'just works' out of the box.

Optimizing the game launch process

This is important so that gamers can get into the game as quickly and seamlessly as possible, without hitting launchers or other 2-foot UI interactions that can be difficult to navigate with a controller.

Steam Big Picture

Big Picture shows up as a selection in the list of SHIELD-optimized games. Launching it gives you access to your Steam games – both supported and unsupported – plus the Steam Store and all the Steam community features. Supported games are recommended for the best, optimized streaming experience – the latest list of supported games can be found on SHIELD.nvidia.com starting at launch.

Unsupported games may work if they have native controller support, but will not be optimized for streaming and may have other streaming compatibility issues.

System Requirements for PC Game Streaming:

> GPU:

- Desktop: GeForce GTX 650 or higher GPU, or

- Notebook (Beta): GeForce GTX 800M, GTX 700M and select Kepler-based GTX 600M GPUs

> CPU: Intel Core i3-2100 3.1GHz or AMD Athlon II X4 630 2.8 GHz or higher

> System Memory: 4 GB or higher

> Software: GeForce Experience™ application and latest GeForce drivers

> OS: Windows 8 or Windows 7

> Routers: 802.11a/g router (minimum). 802.11n dual band router (recommended). See list of GameStream ready routers.

GameStream Ready Games

http://shield.nvidia.com/pc-game-list/

Setup Guide

http://www.geforce.com/whats-new/guides/nvidia-shield-user-guide#3

Nvidia Workstation cards

G-SYNC

What is G-SYNC

NVIDIA G-SYNC is groundbreaking new display technology that delivers the smoothest and fastest gaming experience ever.

G-SYNC's revolutionary performance is achieved by synchronizing display refresh rates to the GPU in your GeForce GTX-powered PC, eliminating screen tearing and minimizing display stutter and input lag.

The result: scenes appear instantly, objects look sharper, and gameplay is super smooth, giving you a stunning visual experience and a serious competitive edge.

The problem: old tech

When TVs were first developed they relied on CRTs, which work by scanning a beam of electrons across the surface of a phosphor-coated tube. This beam causes a pixel on the tube to glow, and when enough pixels are activated quickly enough the CRT can give the impression of full-motion video. Believe it or not, these early TVs had 60Hz refresh rates primarily because the United States power grid is based on 60Hz AC power. Matching TV refresh rates to that of the power grid made early electronics easier to build, and reduced power interference on the screen.

By the time PCs came to market in the early 1980s, CRT TV technology was well established and was the easiest and most cost-effective technology to use for building dedicated computer monitors. 60Hz and fixed refresh rates became standard, and system builders learned how to make the most of a less than perfect situation. Over the past three decades, even as display technology has evolved from CRTs to LCDs and LEDs, no major company has challenged this thinking, and so syncing GPUs to monitor refresh rates remains the standard practice across the industry to this day.

Problematically, graphics cards don't render at fixed speeds. In fact, their frame rates will vary dramatically even within a single scene of a single game, based on the instantaneous load that the GPU sees. So with a fixed refresh rate, how do you get the GPU images to the screen? The first way is to simply ignore the refresh rate of the monitor altogether, and update the image being scanned to the display mid-cycle. This we call 'VSync off mode' and it is the default way most gamers play. The downside is that when a single refresh cycle shows two images, a very obvious "tear line" is evident at the break, commonly referred to as screen tearing. The established solution to screen tearing is to turn VSync on, to force the GPU to delay screen updates until the monitor cycles to the start of a new refresh cycle. This causes stutter whenever the GPU frame rate is below the display refresh rate. It also increases latency, which introduces input lag, the visible delay between a button being pressed and the result occurring on-screen.

Worse still, many players suffer eyestrain when exposed to persistent VSync stuttering, and others develop headaches and migraines, which drove us to develop Adaptive VSync, an effective, critically acclaimed solution. Despite this development, VSync's input lag issues persist to this day, something that's unacceptable for many enthusiasts, and an absolute no-go for eSports pro-gamers who custom-pick their GPUs, monitors, keyboards, and mice to minimize the life-and-death delay between action and reaction.

Enter NVIDIA G-SYNC, which eliminates screen tearing, VSync input lag, and stutter. To achieve this revolutionary feat, we build a G-SYNC module into monitors, allowing G-SYNC to synchronize the monitor to the output of the GPU, instead of the GPU to the monitor, resulting in a tear-free, faster, smoother experience that redefines gaming.
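A toy model may help show where the VSync delay comes from. The sketch below is an assumption-laden simplification, not how the driver or the G-SYNC module actually works: frames are given as "ready" timestamps in milliseconds, VSync on shows each frame at the next fixed refresh tick, and the variable-refresh case shows each frame the moment it is ready. It ignores GPU back-pressure, the panel's maximum refresh rate, and two frames landing on the same tick. All function names are made up for the example.

```python
import math


def vsync_display_times(ready_ms, refresh_hz=60.0):
    """VSync on: each frame waits for the next fixed refresh tick."""
    period = 1000.0 / refresh_hz  # about 16.7 ms at 60Hz
    return [math.ceil(t / period) * period for t in ready_ms]


def variable_refresh_display_times(ready_ms):
    """Monitor follows the GPU: a frame is shown as soon as it is ready."""
    return list(ready_ms)


def added_latency(ready_ms, shown_ms):
    """Extra delay between a frame being ready and being shown."""
    return [shown - ready for ready, shown in zip(ready_ms, shown_ms)]


if __name__ == "__main__":
    ready = [12.0, 30.0, 55.0, 61.0]  # uneven frame times, as in a real game
    fixed = vsync_display_times(ready, refresh_hz=60.0)
    print("VSync on wait (ms):", [round(x, 1) for x in added_latency(ready, fixed)])
    print("Variable refresh wait (ms):",
          added_latency(ready, variable_refresh_display_times(ready)))
```

In this model the wait under VSync depends on where each frame happens to land relative to the refresh tick, which is the uneven pacing described above as stutter and input lag.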

Industry luminaries John Carmack, Tim Sweeney, Johan Andersson and Mark Rein have been bowled over by NVIDIA G-SYNC's game-enhancing technology. Pro eSports players and pro-gaming leagues are lining up to use NVIDIA G-SYNC, which will expose a player's true skill, demanding even greater reflexes thanks to the unnoticeable delay between on-screen actions and keyboard commands. And in-house, our diehard gamers have been dominating lunchtime LAN matches, surreptitiously using G-SYNC monitors to gain the upper hand.

Online, if you have an NVIDIA G-SYNC monitor you'll have a clear advantage over others, assuming you also have a low ping.

How to upgrade to G-SYNC

If you're as excited by NVIDIA G-SYNC as we are, and want to get your own G-SYNC monitor, you can buy a modded monitor now.

Select NVIDIA system builders will be offering ASUS VG248QE monitors that have been specially upgraded with an NVIDIA G-SYNC module. These are now available to buy here.

Videos

SLI

Quick understanding

SLI is NVIDIA's technology that competes with AMD's CrossFire.

What is SLI

NVIDIA SLI intelligently scales graphics performance by combining multiple GeForce GPUs on an SLI-certified motherboard. With over 1,000 supported applications and used by over 94% of multi-GPU PCs on Steam, SLI is the technology of choice for gamers who demand the very best.

SLI features an intelligent communication protocol embedded in the GPU, a high-speed digital interface to facilitate data flow between the two graphics cards, and a complete software suite providing dynamic load balancing, advanced rendering, and compositing to ensure maximum compatibility and performance in today's latest games.

Features

Beyond just better performance, SLI offers a host of advanced features. For PhysX games, SLI can assign the second GPU for physics computation, enabling stunning effects such as life-like fluids, particles, and destruction. For CUDA applications, a second GPU can be used for compute purposes such as Folding@home or video transcoding. Finally, for the ultimate in image quality, SLI antialiasing offers up to 64xAA with two GPUs, 96xAA with three GPUs, or 128xAA with four GPUs.

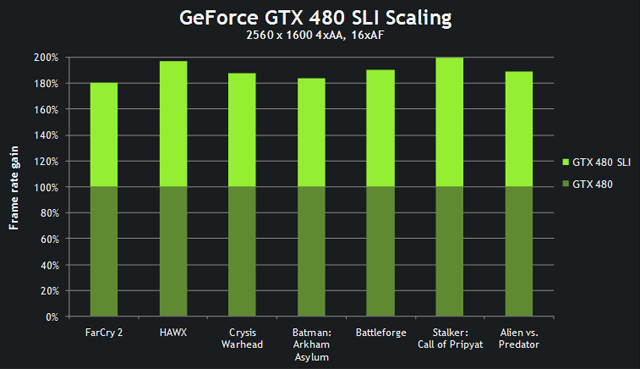

Scaling

Thanks to Fermi's architectural innovations, SLI scaling is higher than ever. Across many popular titles, over 80%, and at times 100%, performance improvement can be obtained by adding a second GPU.
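As a quick worked example of what those scaling percentages mean in frame-rate terms, here is a back-of-the-envelope helper. `sli_fps` is a made-up name, and the formula simply assumes the quoted improvement applies directly to the single-card frame rate; real results vary per game and driver.

```python
def sli_fps(single_gpu_fps: float, scaling: float) -> float:
    """Estimate two-card frame rate.

    scaling is the improvement from the second GPU as a fraction:
    0.8 means 80% faster, 1.0 means a perfect doubling.
    """
    return single_gpu_fps * (1.0 + scaling)


if __name__ == "__main__":
    for scaling in (0.8, 1.0):
        print(f"50 fps on one card, {scaling:.0%} scaling:",
              round(sli_fps(50.0, scaling), 1), "fps")
```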

Not just multiple video cards

When SLI was first introduced the technology was used only to connect multiple video cards. In 2005, however, Gigabyte introduced a video card that used SLI technology to connect two separate NVIDIA GPUs located on the same video card.

This arrangement has become more common over time. Both NVIDIA and AMD have released reference design cards featuring two GPUs in the same video card connected via SLI or CrossFire.

This has confused things a bit because two video cards with two GPUs each would technically be a quad-SLI arrangement even though only two video cards are involved. With that said, these cards are expensive and thus rare, so you can generally assume that if someone is talking about SLI they are talking about the use of two or more video cards.

SLI usually describes a desktop solution, but it is also available in gaming laptops. AMD sometimes pairs its APUs with a discrete Radeon GPU, which means you'll sometimes run across CrossFire laptops that cost only $600 to $800.

NVIDIA has also paired a discrete GPU with an integrated GPU in the past. This was branded with the term Hybrid SLI. NVIDIA was forced out of the chipset business soon after, however, which meant the company no longer offered integrated graphics. Hybrid SLI is effectively dead as a result.

Photos

Videos

Everything you need to know about SLI

Benchmarks & guide

Titan quad-SLI demo

NVIDIA GameWorks™

NVIDIA GameWorks™ pushes the limits of gaming by providing a more interactive and cinematic game experience, enabling next-gen gaming for current games.

We provide technologies, e.g. PhysX and VisualFX, which are easy to integrate into games, as well as tutorials and tools to quickly generate game content.

In addition we also provide tools to debug, profile and optimize your code.

Upcoming technology

NVIDIA FlameWorks enables cinematic smoke, fire and explosions. It combines a state-of-the-art grid-based fluid simulator with an efficient volume rendering engine.

The system is highly customizable, and supports user-defined emitters, force fields, and collision objects.

PhysX Flex is a particle-based simulation technique for real-time visual effects. It will be introduced as a new feature in the upcoming PhysX SDK v3.4.

The Flex pipeline encapsulates a highly parallel constraint solver that exploits the GPU's compute capabilities effectively.
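For anyone wondering what a particle "constraint solver" does, here is a minimal sketch using position-based dynamics with a simple distance constraint. This is a swapped-in illustration, not Flex's API or code: the text above does not describe Flex's internals, the function names are invented, and this version runs sequentially on the CPU, whereas a GPU solver would process many constraints in parallel.

```python
import math


def project_distance(p1, p2, rest_length, w1=1.0, w2=1.0):
    """Move two 2D particles so their separation equals rest_length.

    w1 and w2 are inverse masses; 0.0 pins a particle in place.
    """
    dx, dy = p2[0] - p1[0], p2[1] - p1[1]
    dist = math.hypot(dx, dy)
    if dist == 0.0 or w1 + w2 == 0.0:
        return p1, p2  # nothing sensible to do
    # Constraint error (dist - rest_length), shared out by inverse mass.
    corr = (dist - rest_length) / (dist * (w1 + w2))
    new_p1 = (p1[0] + w1 * corr * dx, p1[1] + w1 * corr * dy)
    new_p2 = (p2[0] - w2 * corr * dx, p2[1] - w2 * corr * dy)
    return new_p1, new_p2


def solve(points, inv_mass, constraints, iterations=10):
    """Repeatedly project every (i, j, rest_length) constraint."""
    pts = list(points)
    for _ in range(iterations):
        for i, j, rest in constraints:
            pts[i], pts[j] = project_distance(
                pts[i], pts[j], rest, inv_mass[i], inv_mass[j])
    return pts
```

For instance, two free particles 4 units apart with a rest length of 2 each move 1 unit toward the other. Chaining many such constraints is one way cloth, ropes and soft bodies can be built from particles.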

NVIDIA GameWorks technology in released games

Call of Duty: Ghosts is using NVIDIA HairWorks to provide a more realistic Riley and wolves. Each hair asset has about 400-500k hair strands.

Most of these hair assets are created on the fly inside the GPU from roughly 10k guide hairs.

Additional technologies used in the game are NVIDIA Turbulence for the smoke bombs, as well as TXAA.

Batman: Arkham Origins is loaded with NVIDIA GameWorks technologies: NVIDIA Turbulence for the snow, steam/fog and shock gloves, as well as PhysX Cloth for ambient cloth.

In addition, it uses NVIDIA ShadowWorks for HBAO+ and advanced soft shadows, and NVIDIA CameraWorks for TXAA and DOF.

ShadowPlay

ShadowPlay from NVIDIA

Don't just brag about your gaming wins. Show the world with GeForce ShadowPlay.

Available only with NVIDIA® GeForce Experience™, ShadowPlay records game action as you play, automatically, with minimal impact on performance. It's fast, simple, and free!

Shadow every game.

Share every victory.

ShadowPlay records up to the last 20 minutes of your gameplay.

Just pulled off an amazing stunt? Hit a hotkey and the game video will be saved to disk. Or, use the manual mode to capture video for as long as you like.

You now have a video record of your gaming awesomeness to share or post anywhere.

ShadowPlay even lets you instantly broadcast your gameplay in an HD-quality stream through Twitch.tv.

How It Works

ShadowPlay has two user-configurable modes. The first, Shadow Mode, continuously records your gameplay, saving up to 20 minutes of high-quality 1920×1080 footage to a temporary file.

So, if you pull off a particularly impressive move in-game, just hit the user-defined hotkey and the footage will be saved to your chosen directory.

The file can then be edited with the free Windows Movie Maker application, or any other .mp4-compatible video editor, and uploaded to YouTube to share with friends or gamers galore.

Alternatively, in Manual Mode, which acts like traditional gameplay recorders, you can save your entire session to disk.

The beauty of ShadowPlay is that, because it takes advantage of the hardware built into every GTX GPU, you don’t have to worry about any major impact on frame rates compared to other, existing applications.

Features

GPU-accelerated H.264 video encoder

Records up to the last 20 minutes of gameplay in Shadow Mode

Records unlimited-length video in Manual Mode

Broadcasts to Twitch

Outputs 1080p at up to 50 Mbps

Minimal performance impact

Full desktop capture (desktop GPUs only)
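To get a feel for how much disk space the 20-minute shadow buffer could need, here is a minimal sketch of the arithmetic. It assumes "50 Mbps" means 50 megabits per second at a constant bitrate and ignores audio and container overhead, so treat the result as a rough upper bound. The function name is made up for illustration.

```python
def recording_size_gb(bitrate_mbps, minutes):
    """Approximate recording size in gigabytes (10^9 bytes).

    Assumes a constant video bitrate given in megabits per second and
    ignores audio tracks and container overhead.
    """
    total_bits = bitrate_mbps * 1_000_000 * minutes * 60
    return total_bits / 8 / 1_000_000_000


# 20 minutes of footage at the maximum quoted bitrate of 50 Mbps
print(recording_size_gb(50, 20))  # 7.5
```

So a full shadow buffer at the top bitrate would be in the region of 7.5 GB, which is worth keeping in mind when choosing the drive for the temporary file.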

Requirements:

General System Requirements

Operating system:

Windows 8, 8.1

Windows 7

Windows Vista (DirectX 11 runtime required)

Windows XP SP3 (driver updates only)

RAM:

2 GB system memory

Supported Hardware:

CPU:

Intel Pentium G Series, Core 2 Duo, Quad Core i3, i5, i7, or higher

AMD Phenom II, Athlon II, Phenom X4, FX or higher

Disk space required:

20 MB minimum

Internet connectivity:

Required

Display resolution:

Any display with 1024×768 to 3840×2160 resolution

GPU:

GPU series - Driver updates - Game optimization - ShadowPlay and SHIELD PC streaming

GeForce TITAN, GTX 700, GTX 600 - Yes - Yes - Yes

GeForce GTX 800M, GTX 700M, select GTX 600M - Yes - Yes - Yes

GeForce 800, 700, 600, 500, 400 - Yes - Yes - No

GeForce 600M, 500M, 400M - Yes - Yes - No

GeForce 300, 200, 100, 9, 8 - Yes - No - No

GeForce 300M, 200M, 100M, 9M, 8M - Yes - No - No

Source: http://blogs.nvidia.com/blog/2013/10/18/shadowplay/

3D Vision

http://www.nvidia.co.uk/object/3d-vision-main.html

3D Vision (previously GeForce 3D Vision) is a stereoscopic gaming kit from NVIDIA which consists of LC shutter glasses and driver software which enables stereoscopic vision for any Direct3D game, with various degrees of compatibility. There have been many examples of shutter glasses over the past decade, but the NVIDIA 3D Vision gaming kit introduced in 2008 made this technology available for mainstream consumers and PC gamers.

The kit is specially designed for 120 Hz LCD monitors but is compatible with CRT monitors (some of which may work at 1024×768×120 Hz and even higher refresh rates), DLP-projectors, and others.

It requires a compatible graphics card from Nvidia (GeForce 200 series or later).

Shutter Glasses

The glasses use a wireless IR protocol and can be charged from a USB cable, allowing around 60 hours of continuous use.

The wireless emitter connects to the USB port and interfaces with the underlying driver software. It also contains a VESA Stereo port for connecting supported DLP TV sets, although standalone operation without a PC with the NVIDIA 3D Vision driver installed is not allowed.

NVIDIA includes one pair of shutter glasses in their 3D Vision kit, SKU 942-10701-0003. Each lens operates at 60 Hz, and the two alternate to create a 120 Hz 3D experience.

This version of 3D Vision supports select 120 Hz monitors, 720p DLP projectors, and passive-polarized displays from Zalman.

Stereo Driver

The stereo driver software can perform automatic stereoscopic conversion by using the 3D models submitted by the application and rendering two stereoscopic views instead of the standard mono view. The automatic driver works in two modes: fully "automatic" mode, where the 3D Vision driver controls screen depth (convergence) and stereo separation, and "explicit" mode, where the game developer controls screen depth, separation, and textures through the proprietary NVAPI.

The quad-buffered mode allows developers to control the rendering, avoiding the automatic mode of the driver and just presenting the rendered stereo picture to left and right frame buffers with associated back buffers.
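As a rough illustration of what separation and convergence do, the sketch below shifts a vertex's clip-space x coordinate per eye. The formula follows the form usually given in NVIDIA's developer guidance for 3D Vision Automatic, but treat it as an assumption here rather than the driver's exact behaviour. The function name and the sample values are hypothetical.

```python
def stereo_clip_x(x, w, separation, convergence, eye):
    """Return the clip-space x coordinate of a vertex for one eye.

    eye is -1 for the left eye and +1 for the right eye.
    Assumed model: x' = x + eye * separation * (w - convergence),
    where w is the vertex's clip-space depth.
    """
    return x + eye * separation * (w - convergence)


# A vertex exactly at the convergence depth is not shifted,
# so it appears at screen depth for both eyes.
print(stereo_clip_x(0.0, 5.0, 0.1, 5.0, +1))

# A vertex deeper than the convergence depth is shifted in
# opposite directions for the left and right eye.
print(stereo_clip_x(0.0, 10.0, 0.1, 5.0, -1))
print(stereo_clip_x(0.0, 10.0, 0.1, 5.0, +1))
```

Under this model, raising separation increases the overall depth effect, while convergence sets which depth sits on the screen plane.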

3D Vision Requirements

See here:

http://www.nvidia.com/object/3d-vision-system-requirements.html

What is supported? (Games/Web/Photos/Blu-Rays)

See here for the full list:

http://www.nvidia.com/object/3d-vision-games.html

Videos:

Setup/Install

Performance Review

NVIDIA SURROUND

NVIDIA Surround Technology

NVIDIA Surround supports three displays at up to 2560x1600 each, the same per-display resolution as AMD’s Eyefinity, although Eyefinity can currently support up to six displays at 2560x1600 each.

How many displays do I need to run 3D Vision Surround?

3D Vision Surround requires three displays or projectors. You can use a variety of displays, including LCD monitors, TVs, or projectors. Please view the 3D Vision Surround system requirements for a full list of supported products.

Please note: NVIDIA 3D Vision Surround and NVIDIA Surround do not support a two-display surround configuration. Both NVIDIA 3D Vision Surround and NVIDIA Surround require three supported displays as defined in the system requirements above.

Does 3D Vision Surround support 2D displays?

Yes, you can run Surround in 2D mode. We call this mode Surround (2D). Make sure that 3D Vision is disabled from the Stereoscopic 3D control panel to use Surround (2D) mode.

What GPUs does 3D Vision Surround support?

Please view the 3D Vision Surround system requirements for a full list of supported products.

Please note that in the first Beta v258.69 driver for 3D Vision Surround, we do not recommend running GeForce GTX 295 Quad SLI because there are bugs in the driver which may result in system instability. We will be providing a future driver update to support this configuration.

Can I use HDMI connectors to run 3D Vision Surround?

3D Vision-Ready LCDs require dual-link DVI connectors and will not work with HDMI connectors. However, you can use HDMI connectors with 3D Vision Projectors that support HDMI input.

Note: 3D DLP HDTVs do use HDMI connectors, but 3D Vision Surround does not support using 3D DLP TVs.

What resolutions does Surround support?

Surround requires three displays to operate. Surround resolutions are calculated by arranging your monitors in portrait or landscape mode and then multiplying the horizontal resolution of a single display in that mode by three.

The following table illustrates some common aspect ratios and resolutions:

Single display - Surround (landscape) - Surround (portrait)

1280x1024 - 3840x1024 - 3072x1280

1920x1080 - 5760x1080 - 3240x1920

1920x1200 - 5760x1200 - 3600x1920

Note: 3D Vision-Ready LCDs do not support portrait mode. However, you can run 3D Vision Surround with 3D projectors in portrait mode.
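The resolution rule above is simple enough to check yourself. This is a minimal sketch, assuming three identical displays and no bezel correction (bezel correction is not covered in this post, and it would add extra horizontal pixels). The function name is made up for illustration.

```python
def surround_resolution(width, height, portrait=False, displays=3):
    """Combined Surround resolution for identical displays side by side.

    In portrait mode each display is rotated, so its width and height
    swap before the horizontal resolution is multiplied.
    """
    if portrait:
        width, height = height, width
    return displays * width, height


# Three 1920x1080 monitors in landscape and in portrait
print(surround_resolution(1920, 1080))                 # (5760, 1080)
print(surround_resolution(1920, 1080, portrait=True))  # (3240, 1920)
```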

Can I use 3D Vision Surround with three displays and set up an accessory display that is not in 3D?

At this time, accessory displays are only supported in Surround (2D) configuration.

CUDA

CUDA™ is a parallel computing platform and programming model invented by NVIDIA. It enables dramatic increases in computing performance by harnessing the power of the graphics processing unit (GPU).

With millions of CUDA-enabled GPUs sold to date, software developers, scientists and researchers are finding broad-ranging uses for GPU computing with CUDA. Here are a few examples:

Identify hidden plaque in arteries: Heart attacks are the leading cause of death worldwide. Harvard Engineering, Harvard Medical School and Brigham & Women's Hospital have teamed up to use GPUs to simulate blood flow and identify hidden arterial plaque without invasive imaging techniques or exploratory surgery.

Analyze air traffic flow: The National Airspace System manages the nationwide coordination of air traffic flow. Computer models help identify new ways to alleviate congestion and keep airplane traffic moving efficiently.

Using the computational power of GPUs, a team at NASA obtained a large performance gain, reducing analysis time from ten minutes to three seconds.

Visualize molecules: A molecular simulation called NAMD (nanoscale molecular dynamics) gets a large performance boost with GPUs. The speed-up is a result of the parallel architecture of GPUs, which enables NAMD developers to port compute-intensive portions of the application to the GPU using the CUDA Toolkit.

Widely Used By Researchers

Since its introduction in 2006, CUDA has been widely deployed through thousands of applications and published research papers, and supported by an installed base of over 500 million CUDA-enabled GPUs in notebooks, workstations, compute clusters and supercomputers.

How to get started

Software developers, scientists and researchers can add support for GPU acceleration in their own applications using one of three simple approaches:

Drop in a GPU-accelerated library to replace or augment CPU-only libraries such as MKL BLAS, IPP, FFTW and other widely-used libraries

Automatically parallelize loops in Fortran or C code using OpenACC directives for accelerators

Develop custom parallel algorithms and libraries using a familiar programming language such as C, C++, C#, Fortran, Java, Python, etc.

What is NVIDIA Tesla™?

With the world’s first teraflop many-core processor, NVIDIA® Tesla™ computing solutions enable the necessary transition to energy-efficient parallel computing power. With thousands of CUDA cores per processor, Tesla scales to solve the world’s most important computing challenges quickly and accurately.

What is OpenACC?

OpenACC is an open industry standard for compiler directives, or hints, which can be inserted in code written in C or Fortran, enabling the compiler to generate code that runs in parallel on multi-CPU and GPU-accelerated systems. OpenACC directives are an easy and powerful way to leverage the power of GPU computing while keeping your code compatible with non-accelerated, CPU-only systems. Learn more at https://developer.nvidia.com/openacc.

What kind of performance increase can I expect using GPU Computing over CPU-only code?

This depends on how well the problem maps onto the architecture. For data-parallel applications, accelerations of more than two orders of magnitude have been seen. You can browse research, developer, applications and partners on our CUDA In Action Page.
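To put "two orders of magnitude" in context, here is a small sketch that works out the speed-up for the NASA air traffic figure quoted earlier in this section (ten minutes down to three seconds). The function names are made up for illustration.

```python
import math


def speedup(cpu_seconds, gpu_seconds):
    """How many times faster the GPU run is than the CPU-only run."""
    return cpu_seconds / gpu_seconds


def orders_of_magnitude(factor):
    """Express a speed-up factor as a power of ten."""
    return math.log10(factor)


factor = speedup(10 * 60, 3)  # ten minutes versus three seconds
print(factor)                 # 200.0
print(round(orders_of_magnitude(factor), 2))
```

A 200x speed-up is a little over two orders of magnitude, which is in line with the claim above for well-suited data-parallel work.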

What operating systems does CUDA support?

CUDA supports Windows, Linux and Mac OS. For the full list, see the latest CUDA Toolkit Release Notes. The latest version is available at http://docs.nvidia.com.

Which GPUs support running CUDA-accelerated applications?

CUDA is a standard feature in all NVIDIA GeForce, Quadro, and Tesla GPUs as well as NVIDIA GRID solutions. A full list can be found on the CUDA GPUs Page.

What is the "compute capability"?

The compute capability of a GPU determines its general specifications and available features. For details, see the Compute Capabilities section in the CUDA C Programming Guide.

Where can I find a good introduction to parallel programming?

There are several university courses online, technical webinars, article series and also several excellent books on parallel computing. These can be found on our CUDA Education Page.

Hardware and Architecture

Will I have to re-write my CUDA Kernels when the next new GPU architecture is released?

No. CUDA C/C++ provides an abstraction; it’s a means for you to express how you want your program to execute. The compiler generates PTX code, which is also not hardware specific. At runtime the PTX is compiled for a specific target GPU. This is the responsibility of the driver, which is updated every time a new GPU is released. It is possible that changes in the number of registers or size of shared memory may open up the opportunity for further optimization, but that's optional. So write your code now, and enjoy it running on future GPUs.

Does CUDA support multiple graphics cards in one system?

Yes. Applications can distribute work across multiple GPUs. This is not done automatically, however, so the application has complete control. See the "multiGPU" example in the GPU Computing SDK for an example of programming multiple GPUs.
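Since the application has to split the work itself, here is a minimal sketch of one way to divide a batch of work items evenly across several GPUs. It is plain Python and only shows the partitioning step. A real CUDA program would then select each device (for example with the CUDA runtime's cudaSetDevice) and launch its share of the work there. The function name and the device count are hypothetical.

```python
def split_work(n_items, n_devices):
    """Split n_items into contiguous (start, end) ranges, one per device.

    Ranges are half-open, and any remainder is spread over the first
    devices so that no device gets more than one extra item.
    """
    base, extra = divmod(n_items, n_devices)
    ranges = []
    start = 0
    for device in range(n_devices):
        count = base + (1 if device < extra else 0)
        ranges.append((start, start + count))
        start += count
    return ranges


# 10 work items across 3 GPUs (for example a 3-way SLI owner's system)
print(split_work(10, 3))  # [(0, 4), (4, 7), (7, 10)]
```

An even split like this only makes sense when the GPUs are identical. With mismatched cards you would want to weight the ranges by each card's throughput.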

NVIDIA GameStream

PC GAMING MADE PORTABLE WITH NVIDIA GAMESTREAM™ TECHNOLOGY.

NVIDIA GameStream harnesses the power of the most advanced GeForce® GTX™ graphics cards, or the GRID cloud (Beta), together with exclusive game-speed streaming technologies to bring a low-latency PC gaming experience to your SHIELD portable.

With streaming support for over 100 of the hottest PC titles at up to 1080p and 60 frames per second, SHIELD offers a truly unique handheld gaming experience.

NEW: Remotely access your PC to play your games away from your home.*

Play your favorite PC games on your HDTV at up to 1080p at 60 FPS using a wireless Bluetooth controller with SHIELD Console Mode.

NEW: Stream games like World of Warcraft, League of Legends, and DOTA 2 to your HDTV using Wi-Fi and play with full Bluetooth keyboard and mouse support.

Download GeForce Experience to see if your PC is ready.

How GAMESTREAM Works

NVIDIA uses the H.264 encoder built into GeForce GTX 650 or higher desktop GPUs and GeForce GTX Kepler and Maxwell notebooks, along with an efficient wireless streaming protocol integrated into GeForce Experience, to stream games from the PC to SHIELD over the user's home Wi-Fi network with ultra-low latency. Gamers then use SHIELD as the controller and display for their favorite PC games, as well as for Steam Big Picture.

In addition to streaming the game, NVIDIA also configures PC games for streaming using GeForce Experience to deliver a seamless out of the box experience. There are three key activities involved here:

Generating optimal game settings for streaming games from the PC to SHIELD

We use our GeForce Experience servers to determine the best quality settings based on the user's CPU and GPU, and target higher frame rates than 'normal' optimal settings to ensure the lowest latency gaming experience. These settings are automatically applied when the game is launched so gamers don't have to worry about configuring these settings themselves.

Enabling controller support in games

This avoids gamers having to manually configure controller support in the game – it 'just works' out of the box.

Optimizing the game launch process

This is important so that gamers can get into the game as quickly and seamlessly as possible, without hitting launchers or other 2-foot UI interactions that can be difficult to navigate with a controller.

Steam Big Picture

Big Picture shows up as a selection in the list of SHIELD-optimized games. Launching it gives you access to your Steam games – both supported and unsupported – plus the Steam Store and all the Steam community features. Supported games are recommended for the best, optimized streaming experience – the latest list of supported games can be found on SHIELD.nvidia.com starting at launch.

Unsupported games may work if they have native controller support, but will not be optimized for streaming and may have other streaming compatibility issues.

System Requirements for PC Game Streaming:

> GPU:

- Desktop: GeForce GTX 650 or higher GPU, or

- Notebook (Beta): GeForce GTX 800M, GTX 700M and select Kepler-based GTX 600M GPUs

> CPU: Intel Core i3-2100 3.1GHz or AMD Athlon II X4 630 2.8 GHz or higher

> System Memory: 4 GB or higher

> Software: GeForce Experience™ application and latest GeForce drivers

> OS: Windows 8 or Windows 7

> Routers: 802.11a/g router (minimum). 802.11n dual band router (recommended). See list of GameStream ready routers.

GameStream Ready Games

http://shield.nvidia.com/pc-game-list/

Setup Guide

http://www.geforce.com/whats-new/guides/nvidia-shield-user-guide#3

NVIDIA Workstation Cards

QUADRO ADVANTAGE

The NVIDIA® Quadro® family of products is designed and built specifically for professional workstations, powering more than 200 professional applications across a broad range of industries. From Manufacturing, Sciences and Medical Imaging, and Energy, to Media and Entertainment, Quadro solutions deliver unbeatable performance and reliability that make them the graphics processors of choice for professionals around the globe.

DISCOVER THE POWER OF NVIDIA KEPLER™ ARCHITECTURE

Get the power to realise your vision with the new family of NVIDIA Quadro professional graphics. They’re fueled by Kepler, NVIDIA’s most powerful GPU architecture ever, bringing a whole new level of performance and innovative capabilities to modern workstations.

Whether you’re creating revolutionary products, designing groundbreaking architecture, navigating massive geological datasets, or telling spectacularly vivid visual stories, Quadro graphics solutions give you the power to do it better and faster.

Guaranteed compatibility through support for the latest OpenGL, DirectX, and NVIDIA CUDA® standards, deep professional software developer engagements, and certification with over 200 applications by software companies.

Maximised performance through the unique capabilities of the latest Kepler GPU—including the SMX next-generation multiprocessor engine, advanced temporal anti-aliasing (TXAA) and fast approximate anti-aliasing (FXAA) modes, and innovative bindless textures technology—as well as larger on-board GPU memory and optimised software drivers.

Transformed and accelerated workflows that drive faster time-to-results in product design or digital content creation. This is made possible through simultaneous design and rendering or simulation using Quadro and NVIDIA Tesla® cards in the same system—called NVIDIA Maximus™.

REIMAGINE THE VISUAL WORKSPACE

Quadro solutions combine the most advanced display technologies and ecosystem interfaces to provide the ultimate visual workspace for maximum productivity.

Stunning image quality with movie-quality antialiasing techniques and enhanced color depth, higher refresh rates, and ultra-high screen resolution offered by the DisplayPort standard.

Simplified display scaling through increased display outputs per board, choice of display connections, and multi-display blending and synchronization made possible by NVIDIA Mosaic technology.

Enhanced desktop workspace across multiple displays using intuitive placement of windows, multiple virtual desktops, and user profiles offered by the NVIDIA nView® visual workspace manager.

GET PERFORMANCE YOU CAN TRUST. EVERY TIME

Reliability is core to all Quadro solutions, and one of the keys to the decade-long industry leadership of Quadro-powered professional desktops. Every product is designed to deliver the peace of mind you need to focus on what you do best—changing the way we all look at the world.

Highest-quality products through power-efficient hardware designs and component selection for optimum operational performance, durability, and longevity.

Simplified software driver deployment for the IT team through a regular cadence of long-life, stable driver releases and quality-assurance processes.

Maximum uptime through exhaustive testing in partnership with leading OEMs and system integrators that simulates the most demanding real-world conditions.

CREATE WITHOUT THE WAIT USING NVIDIA MAXIMUS

The NVIDIA Tesla-accelerated computing co-processor pairs with Quadro products to fundamentally transform traditional workflows. Take advantage of the NVIDIA Maximus solution to simulate and visualize more design options, explore more innovative entertainment ideas, and accelerate time to market for a competitive advantage.

Quadro graphics card for desktop workstations

Designed and built specifically for professional workstations, NVIDIA® Quadro® GPUs power more than 200 professional applications across a broad range of industries, including Manufacturing, Media and Entertainment, Sciences, and Energy. Professionals like you trust Quadro solutions to deliver the best possible experience with applications such as Adobe CS6, Avid Media Composer, Autodesk Inventor, Dassault Systemes CATIA and SolidWorks, Siemens NX, PTC Creo, and many more. For maximum application performance, add an NVIDIA Tesla® GPU to your workstation and experience the power of NVIDIA Maximus™ technology.

The cards

Quadro K6000

The most powerful pro graphics on the planet, built for tackling the largest visual projects, with 12 GB of on-board memory, advanced display capabilities for large-scale visualization, and support for high-performance video I/O.

Quadro K5000

Extreme performance to handle demanding workloads, 4 GB of on-board memory, advanced quad-display capabilities for large-scale visualization, and support for high-performance video I/O.

Quadro K5000 for Mac

Extreme performance on the Apple Mac Pro platform for accelerating professional design, animation, and video applications, 4 GB of on-board memory, and advanced quad-display capabilities for large-scale visualization.

Quadro K4000

Supercharged performance for graphics-intensive applications, large 3 GB on-board memory, multi-monitor support, and stereo capability in a single-slot configuration.

Quadro K2000

Outstanding performance with a range of professional applications, substantial 2 GB on-board memory to hold large models, and multi-monitor support for enhanced desktop productivity.

Quadro K2000D

Outstanding performance with a range of professional applications, dual-link DVI capability, 2 GB of GDDR5 memory, and multi-monitor support for enhanced desktop productivity.

Quadro K600

Great performance for leading professional applications, 1 GB of on-board memory, and a low-profile form factor for maximum usage flexibility.

Quadro 410

Entry-level professional graphics with ISV certifications.

Videos
