Intel gave up on competing. Officially.

Intel CEO gives up chasing market share

https://www.fudzilla.com/news/pc-hardware/50012-intel-ceo-gives-up-chasing-market-share

Yeah, in that article Intel also says it is the underdog.

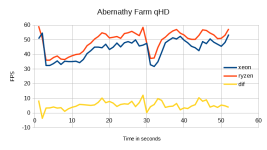

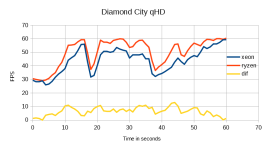

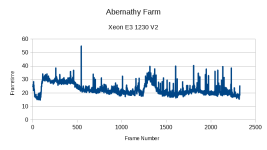

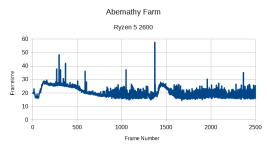

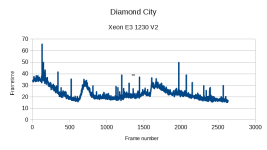

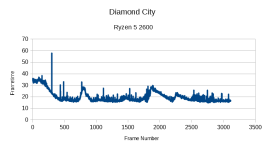

I went from a 3770k to a 3800X with my 1080 Ti, and the smoothness was noticeable for a CPU upgrade, not just measurable. The 1% lows were much higher, making for tangibly smoother gameplay. I was surprised myself TBH.

You seeing good performance gains upgrading?

What % improvements are you seeing roughly though? Like what FPS did Tomb Raider run at before you upgraded?

This is what is tempting me to upgrade too. I don't necessarily need better max/average FPS, but I want to smooth out some of the stutters I'm getting from the 1% lows. It's not so much just the CPU change but the whole platform shift as well: I'd be going from 1866 DDR3 to 3600 DDR4, PCIe 2 to PCIe 3 and SATA 3 to NVMe, all while adding an extra 2/4 cores and more threads at the same time...
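For a rough sense of scale on that platform shift, here is a back-of-envelope sketch of the theoretical peak bandwidth of each interface. It assumes dual-channel memory, a x16 GPU slot and an NVMe drive on PCIe 3.0 x4, none of which the post states. The helper names are made up for illustration, and real-world gains in games will be far smaller than these peak figures suggest.

```python
def ddr_bandwidth_gbs(mega_transfers_per_s, channels=2, bus_bytes=8):
    """Theoretical peak DDR bandwidth in GB/s (64-bit bus per channel).

    Assumes dual-channel by default, which the post does not state.
    """
    return mega_transfers_per_s * bus_bytes * channels / 1000.0


def serial_link_gbs(gigatransfers_per_s, lanes, payload_bits, total_bits):
    """Theoretical peak bandwidth in GB/s of an encoded serial link."""
    return gigatransfers_per_s * lanes * payload_bits / total_bits / 8.0


ddr3_1866 = ddr_bandwidth_gbs(1866)           # about 29.9 GB/s
ddr4_3600 = ddr_bandwidth_gbs(3600)           # 57.6 GB/s
pcie2_x16 = serial_link_gbs(5, 16, 8, 10)     # 8b/10b encoding
pcie3_x16 = serial_link_gbs(8, 16, 128, 130)  # 128b/130b encoding
sata3 = serial_link_gbs(6, 1, 8, 10)          # 6 Gb/s link, 8b/10b
nvme_gen3_x4 = serial_link_gbs(8, 4, 128, 130)  # assumed PCIe 3.0 x4 drive

for name, value in [
    ("DDR3-1866 dual channel", ddr3_1866),
    ("DDR4-3600 dual channel", ddr4_3600),
    ("PCIe 2.0 x16", pcie2_x16),
    ("PCIe 3.0 x16", pcie3_x16),
    ("SATA 3", sata3),
    ("NVMe on PCIe 3.0 x4", nvme_gen3_x4),
]:
    print(f"{name}: {value:.2f} GB/s")
```

On paper, memory and GPU link bandwidth roughly double, and the storage link goes up by about 6.5x.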

As cjdavid says above, I found a big upgrade going from a 3770k to a modern processor (AMD 3800X), and it wasn't so much the max or avg FPS that made the difference but the change in the 1% FPS lows, which you can 'feel'. You want to be GPU bound; on your current CPU your GPU may show load in the 85-100% range. Change to a modern processor and GPU load will be consistently 98-100%.

I was skeptical, and as an advocate of this thread I only considered max and avg FPS results, where the uplift doesn't look worth it. But I changed to a modern processor, and I guess to a fair extent some of the performance is down to the RAM too. It really gives a boost to how your current GPU will work: much smoother, and an experience you can 'feel'. If your current CPU is anywhere near 50% on the cores when gaming, then it's doing too much to get the instructions to the GPU in a regular enough manner to feel smooth.

You may think a 10% increase in max fps isn't worth the cost of a platform upgrade, but it's the 1% lows that make the difference.
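For anyone wondering how the 1% lows are worked out, here is a minimal sketch. It assumes one common definition (the average FPS over the slowest 1% of frames; some tools report the 99th percentile frame time instead), and the two frame-time captures are made-up numbers, not measurements from anyone's system.

```python
def average_fps(frame_times_ms):
    """Average FPS: frames rendered divided by total time."""
    return 1000.0 * len(frame_times_ms) / sum(frame_times_ms)


def one_percent_low_fps(frame_times_ms):
    """Average FPS over the slowest 1% of frames (one common definition)."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    slowest_first = sorted(frame_times_ms, reverse=True)
    count = max(1, len(slowest_first) // 100)
    worst = slowest_first[:count]
    return 1000.0 / (sum(worst) / count)


# Hypothetical captures of 1000 frames each (frame times in milliseconds).
steady = [10.0] * 990 + [12.5] * 10    # small dips only
stuttery = [9.0] * 990 + [50.0] * 10   # faster on average, but with hitches

for name, capture in [("steady", steady), ("stuttery", stuttery)]:
    print(
        f"{name}: avg {average_fps(capture):.1f} FPS, "
        f"1% low {one_percent_low_fps(capture):.1f} FPS"
    )
```

In this made-up case the stuttery run has the higher average (about 106 vs 100 FPS) but a 1% low of 20 FPS against 80 FPS, which is the kind of gap you feel rather than see in the headline number.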

think my 2600k is nearing the end,

I'm in the same boat.

Had my 2600k and P67 mobo for nine years now, running on a mild 4GHz all-core overclock.

Managed to fry my motherboard the other week so have now cannibalised an H77 from elsewhere so I can't even overclock the CPU anymore.

I'm running a 1080Ti on an ultrawide and the CPU is starting to become a serious bottleneck in most games, with very few pushing the GPU past around 60% before the CPU tops out.

I know I need to upgrade pronto but I'm hanging on for Comet Lake. I've discounted Ryzen (don't go there fanboys, I'm not going to change my mind) as Intel are still faster for gaming (which is the only demanding task I run) and the fans on the X570 boards are a major blocker for me too.

I know Comet Lake isn't going to offer much benefit over the current 9th gen but it just seems daft to upgrade now when the next gen is only a couple of months away (hopefully).

It's a tough one. The Sandy chips have been legendary; as you say, 8/9 years and they still cut the mustard. I'm fortunate enough that mine is currently sitting at 4.8GHz under water, so it isn't really a major bottleneck yet, but when you start looking at £85 CPUs and see them beating yours, it's time to look at moving on.

Cinebench shows theirs at literally 4x the raw performance of mine (at stock).

Can't bear to part with it; it might just have to get moved under the stairs with a pile of storage attached as a Plex server.

"It's going to come from the change to DDR4 and an NVMe drive rather than the CPU being any better."

There's only a certain amount of memory speed any particular CPU can use to full effect.

The only next gen for which we have any idea of a release date is Zen 3.

You'll then also have the usual limited availability of the high-end models for the first few months. It could be the better part of a year before you can get your hands on a 10700/10900, so you should consider whether you'd rather upgrade now to a 9700/9900 and enjoy the games that you're currently playing more.

I am truly starting to believe that the reason the various products, including the Xeon replacement for an i7 920 I am running, are still competitive and have lasted so long in games is purely down to Intel not pushing the envelope at all.

I feel that AMD creating products for the next generation of consoles is actually what has brought us forward, as Intel was happy to plod along doing nothing. Without innovation the gaming industry didn't really change, and any upgrades were driven purely by GPU updates, when SO MUCH MORE was possible.

Intel need to be punished badly for this. I'd suggest steering away from any Intel chip for a couple of generations, until they have created something innovative rather than reactive and actually generate competition.

Max fps is something that shouldn't even be mentioned anywhere.

Also, average isn't that useful without including the amount of variation, especially at the lower end.

Years ago on a Finnish PC forum, a few users who had both a six-core previous-gen workstation platform and a "faster" four-core Skylake said that in more demanding games the six-core CPU gave smoother gameplay.

No doubt because of "less valleys between high spots".
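To put a number on the variation point, here is a small sketch with two made-up frame-time traces that have exactly the same average FPS but very different spread. The traces and the `summarize` helper are illustrative only; it uses Python's standard `statistics` module (3.8 or newer for `fmean`).

```python
import statistics


def summarize(frame_times_ms):
    """Average FPS plus two simple measures of frame-time variation."""
    avg_ms = statistics.fmean(frame_times_ms)
    return {
        "avg_fps": 1000.0 / avg_ms,
        "frame_time_stdev_ms": statistics.pstdev(frame_times_ms),
        "worst_frame_ms": max(frame_times_ms),
    }


# Hypothetical traces (milliseconds per frame), 200 frames each.
smooth = [16.0, 17.0, 16.0, 17.0] * 50   # even pacing
spiky = [10.0, 10.0, 10.0, 36.0] * 50    # fast frames with regular valleys

smooth_stats = summarize(smooth)
spiky_stats = summarize(spiky)

for name, stats in [("smooth", smooth_stats), ("spiky", spiky_stats)]:
    print(
        f"{name}: avg {stats['avg_fps']:.1f} FPS, "
        f"stdev {stats['frame_time_stdev_ms']:.2f} ms, "
        f"worst frame {stats['worst_frame_ms']:.1f} ms"
    )
```

Both traces average about 60.6 FPS, but the spiky one has a frame-time standard deviation of roughly 11 ms against 0.5 ms, so the average alone hides the valleys.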