Bluedot55 ran both DLSS and third-party scalers on an Nvidia RTX 4090 and measured Tensor core utilisation. Average Tensor core usage under DLSS was extremely low, at less than 1%.

Initial investigations suggested even the peak utilisation registered in the 4-9% range, implying that while the Tensor cores were being used, they probably weren't actually essential. However, increasing the polling rate revealed that peak utilisation is in fact in excess of 90%, but only for brief periods measured in microseconds.

When you think about it, that makes sense. The upscaling process has to be ultra quick if it's not to slow down the overall frame rate. It has to take a rendered frame, process it, do whatever calculations are required for the upscaling, and output the full upscaled frame before the 3D pipeline has had time to generate a new frame.

So what you would expect to find is exactly what Bluedot55 observed: an incredibly brief but intense burst of activity inside the Tensor cores when DLSS upscaling is enabled.
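To see why the polling rate matters so much, here is a minimal Python sketch of the effect. It is not Bluedot55's method or data: the frame time, burst length, burst level and polling windows are all made-up values, chosen only so the results land in the same ballpark as the figures quoted above. It also assumes that a monitoring tool reports utilisation as an average over each polling window.

```python
# Toy model: one short Tensor core burst per frame, idle otherwise.
# All numbers here are hypothetical and chosen purely for illustration.

def make_trace(frames=10, frame_us=10_000, burst_us=100, burst_level=0.95):
    """Build a per-microsecond utilisation trace (values from 0.0 to 1.0)."""
    trace = []
    for _ in range(frames):
        trace.extend([burst_level] * burst_us)        # brief, intense burst
        trace.extend([0.0] * (frame_us - burst_us))   # idle for the rest of the frame
    return trace


def windowed_utilisation(trace, window_us):
    """Average the trace over fixed polling windows, one reading per window."""
    readings = []
    for start in range(0, len(trace), window_us):
        chunk = trace[start:start + window_us]
        readings.append(sum(chunk) / len(chunk))
    return readings


trace = make_trace()

average = sum(trace) / len(trace)                      # long-run average
coarse_peak = max(windowed_utilisation(trace, 2_000))  # slow polling: 2 ms windows
fine_peak = max(windowed_utilisation(trace, 50))       # fast polling: 50 us windows

print(f"average utilisation: {average:.2%}")
print(f"peak at 2 ms polling: {coarse_peak:.2%}")
print(f"peak at 50 us polling: {fine_peak:.2%}")
```

With these made-up numbers, the average comes out just under 1%, the peak at slow polling just under 5%, and the peak at fast polling at about 95%. The workload is identical in all three cases; only the width of the measurement window changes.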

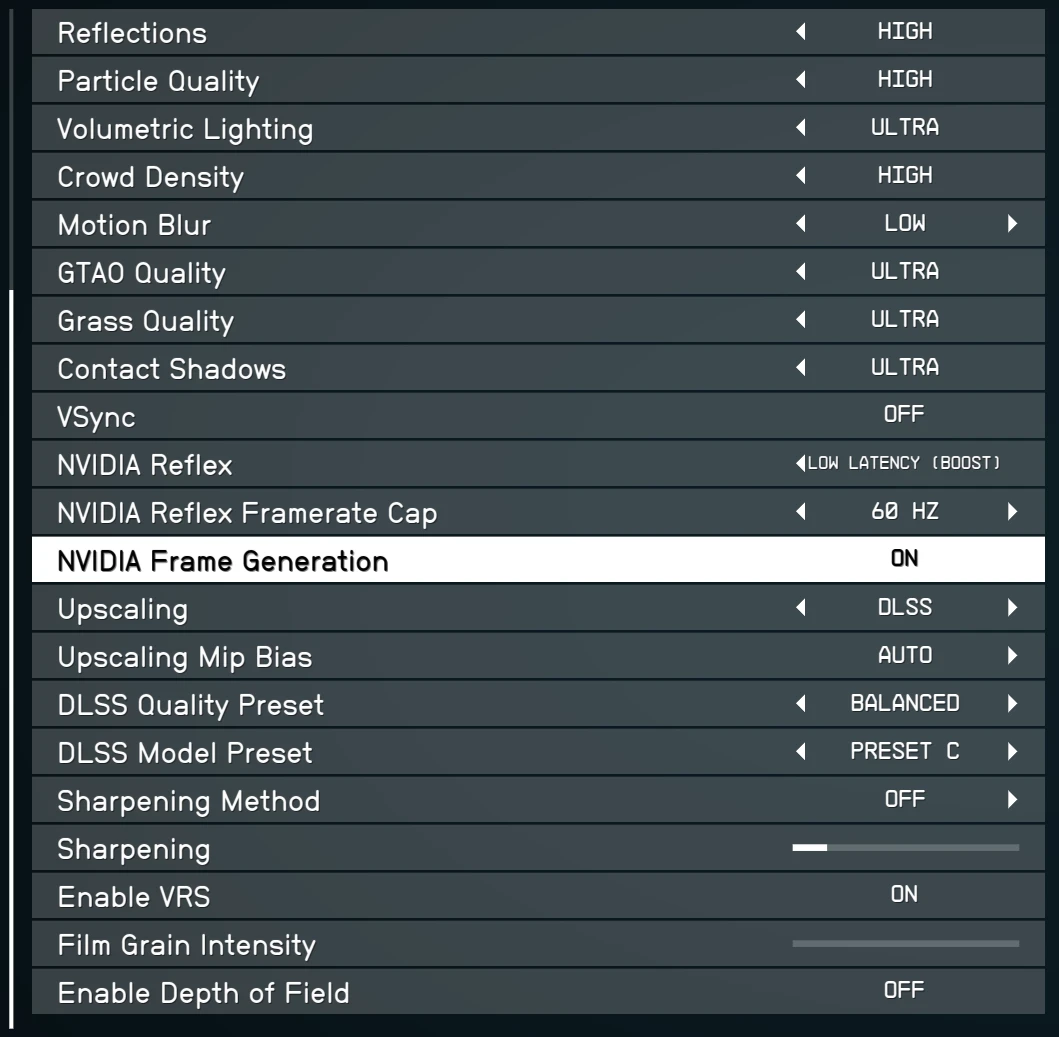

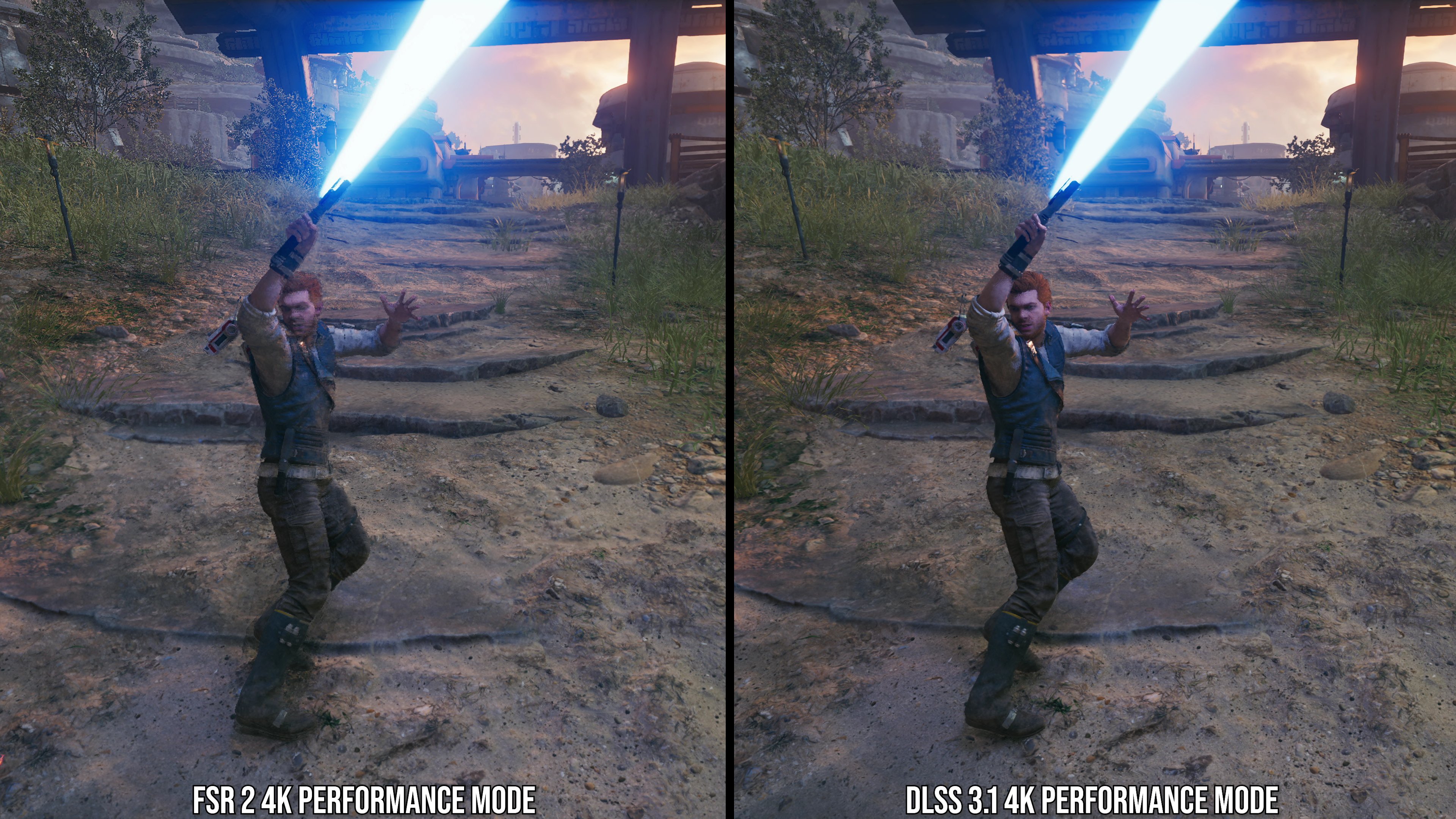

Of course, Nvidia's GPUs have offered Tensor cores for three generations, and you have to go back to the GTX 10 series to find an Nvidia GPU that doesn't support DLSS at all. However, as Nvidia adds new features to the overall DLSS feature set, such as Frame Generation, older hardware is being left behind.

What this investigation shows is that while it's tempting to doubt Nvidia's motives whenever it seemingly locks out older GPUs from a new feature, the reality may simply be that the new GPUs can do things old ones can't. That's progress for you.


I'm 99% sure the main game he was referring to was Boundary, as that game was shown to be running DLSS and ray tracing before AMD swooped in and all Nvidia tech got removed...
