Which one is that?

I am hardly gaming on the PC at the moment, so I am fine with what I have. It will get harder in April, when games I want to play start coming out. But I refuse to buy old tech knowing the new generation is not that far away. I will keep what I have until 2021 if I have to and use my PS4 Pro!

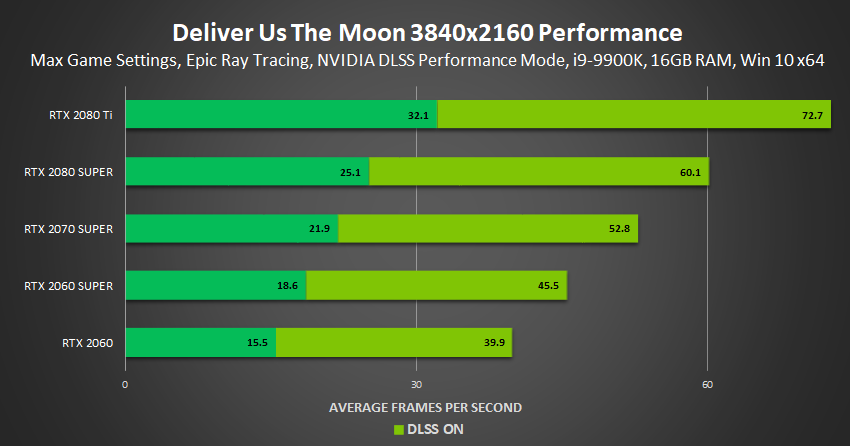

Really hope the 3070 matches, or comes within reach of, 2080 Ti performance and has much better RT performance, as this gen has been ****.

Whatever is around when I actually NEED to upgrade. That probably won't be before next gen.